Transfer Large Files With Kafka

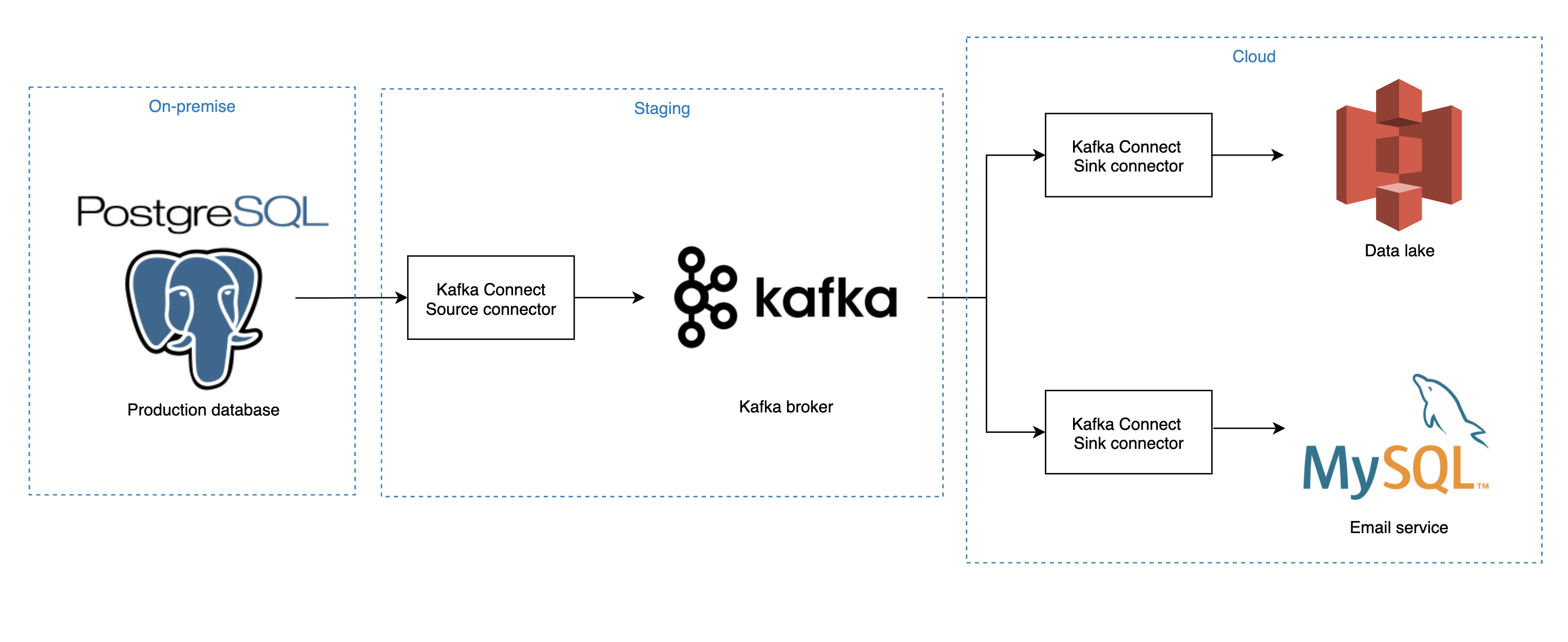

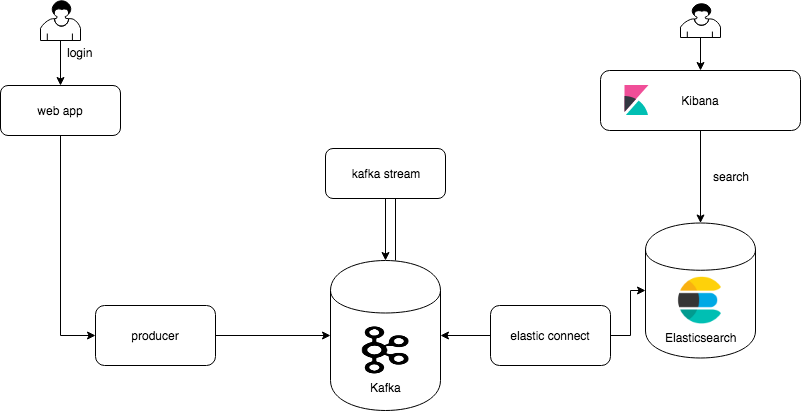

Kafka Connect is part of Apache Kafka and only requires a JSON file.

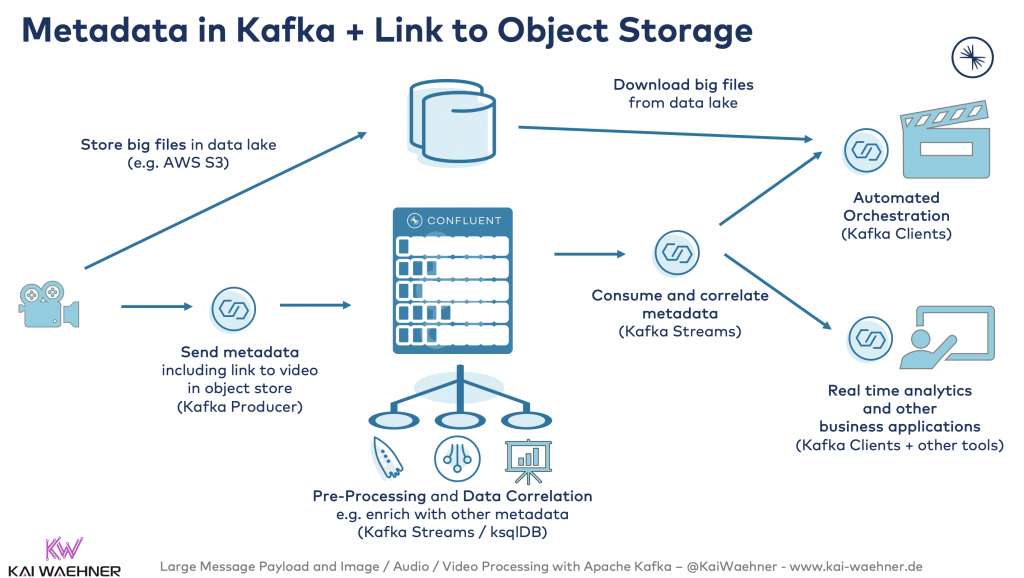

If you want to batch-process some huge files, Kafka is the wrong tool to use. In both the client and the broker, a 1 GB chunk of memory has to be allocated in the JVM for every 1 GB message. In her article Handling large messages in Kafka, Gwen Shapira suggests several ways to deal with large messages and says that the optimal message size for Kafka is around 10 KB.
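One way to stay close to that message size is to split a large payload into small chunks on the producer side and reassemble them on the consumer side. The sketch below only shows the splitting and reassembly logic and does not talk to a broker. The record layout (`file_id`, `index`, `total`, `sha256`, `data`) and the 10 KB `CHUNK_SIZE` are assumptions made for illustration, not part of any Kafka API.

```python
import hashlib
import uuid

CHUNK_SIZE = 10 * 1024  # assumed target of roughly 10 KB per message


def split_into_chunks(payload: bytes, chunk_size: int = CHUNK_SIZE):
    """Split a payload into ordered chunk records that could be sent one by one."""
    file_id = uuid.uuid4().hex  # hypothetical key shared by all chunks of one file
    total = max(1, -(-len(payload) // chunk_size))  # ceiling division, at least 1
    checksum = hashlib.sha256(payload).hexdigest()
    return [
        {
            "file_id": file_id,
            "index": i,
            "total": total,
            "sha256": checksum,
            "data": payload[i * chunk_size:(i + 1) * chunk_size],
        }
        for i in range(total)
    ]


def reassemble(chunks):
    """Rebuild the original payload from its chunks and verify the checksum."""
    ordered = sorted(chunks, key=lambda c: c["index"])
    if len(ordered) != ordered[0]["total"]:
        raise ValueError("missing chunks")
    payload = b"".join(c["data"] for c in ordered)
    if hashlib.sha256(payload).hexdigest() != ordered[0]["sha256"]:
        raise ValueError("checksum mismatch")
    return payload
```

Using the same `file_id` as the message key for every chunk would keep the chunks of one file on the same partition, so they arrive in order.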

9/20/2016: Kafka is not designed to move large messages, and it is not the best choice for moving large binary files like photos. However, before tuning Kafka to accept them, Cloudera recommends that you first try to reduce the size of the messages. Compression in Apache Kafka is one way to do so.
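To get a feel for how much compression can shrink a message, a payload can be compressed locally before deciding how to send it. This is a minimal sketch using Python's standard `gzip` module; the helper name `compress_if_smaller` is hypothetical. In a real deployment the producer's `compression.type` setting would normally do this work rather than application code.

```python
import gzip


def compress_if_smaller(payload: bytes):
    """Return (codec, body), keeping the compressed form only when it is smaller."""
    compressed = gzip.compress(payload)
    if len(compressed) < len(payload):
        return "gzip", compressed
    return "none", payload


codec, body = compress_if_smaller(b"log line\n" * 10_000)
print(codec, len(body) < 90_000)  # gzip True
```

Already-compressed data such as JPEG photos usually gains little from a second round of compression, which is why the helper falls back to the original bytes.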

Kafka's strength is managing streaming data. With Redis the maximum payload... Even a 1 GB file could be sent via Kafka, but this is undoubtedly not what Kafka was designed for.

Sporadic large messages, option 1: Kafka can be tuned to handle large messages. Use Apache Kafka clients that are up to date.
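Tuning for large messages usually means raising several size limits together, because the broker, the topic, the producer, and the consumer each enforce their own. The sketch below groups the commonly adjusted settings in plain Python dictionaries. The setting names are real Kafka configuration keys, but the helper functions, the grouping, and the 10 MB value are assumptions for illustration, and the size check ignores protocol overhead.

```python
ONE_MB = 1024 * 1024


def large_message_settings(max_message_bytes: int) -> dict:
    """Group the size limits that usually have to be raised together."""
    return {
        "broker": {
            "message.max.bytes": max_message_bytes,
            "replica.fetch.max.bytes": max_message_bytes,
        },
        "topic": {"max.message.bytes": max_message_bytes},
        "producer": {"max.request.size": max_message_bytes},
        "consumer": {"max.partition.fetch.bytes": max_message_bytes},
    }


def fits(payload: bytes, settings: dict) -> bool:
    """Cheap pre-send check against the producer limit (ignores protocol overhead)."""
    return len(payload) <= settings["producer"]["max.request.size"]


settings = large_message_settings(10 * ONE_MB)
print(fits(b"x" * (5 * ONE_MB), settings))   # True
print(fits(b"x" * (11 * ONE_MB), settings))  # False
```

A check like `fits` lets the application reject or reroute an oversized payload before the client raises a size-related exception.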

WeTransfer is the simplest way to send your files around the world. Low end-to-end latency: there are more round... It supports several file formats.

Database replication: replicates a data store by using another data store. Many times, sending large messages over Kafka fails with a MessageSizeTooLargeException. Based on your description, I am assuming that your use case is bringing huge files to HDFS and processing them afterwards.