Transfer Learning Freeze Layers

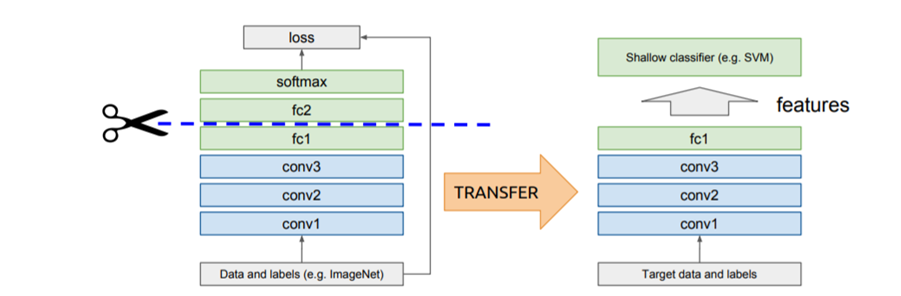

Setting `layer.trainable = False` freezes a layer: it prevents the weights in that layer from being updated during training.
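As a minimal sketch of that behaviour, assuming TensorFlow's bundled Keras is available, the snippet below freezes one layer of a tiny model and checks its weights after a short training run. The layer names, sizes, and random data are illustrative assumptions, not part of the original text.

```python
import numpy as np
import tensorflow as tf

# A tiny two-layer model; the layer sizes are arbitrary illustration values.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(4,)),
    tf.keras.layers.Dense(8, activation="relu", name="frozen_dense"),
    tf.keras.layers.Dense(1, name="trainable_dense"),
])

# Freeze the first layer before compiling so the setting is picked up.
frozen = model.get_layer("frozen_dense")
head = model.get_layer("trainable_dense")
frozen.trainable = False
model.compile(optimizer="sgd", loss="mse")

# Snapshot the weights so they can be compared after training.
before_frozen = [w.copy() for w in frozen.get_weights()]
before_head = [w.copy() for w in head.get_weights()]

# Random data, used only to drive a few optimizer steps.
rng = np.random.default_rng(0)
x = rng.normal(size=(32, 4)).astype("float32")
y = rng.normal(size=(32, 1)).astype("float32")
model.fit(x, y, epochs=2, verbose=0)

frozen_unchanged = all(
    np.array_equal(b, a) for b, a in zip(before_frozen, frozen.get_weights())
)
head_changed = any(
    not np.array_equal(b, a) for b, a in zip(before_head, head.get_weights())
)
print("frozen layer unchanged:", frozen_unchanged)
print("trainable layer changed:", head_changed)
```

The frozen layer still takes part in the forward pass; it is only excluded from the weight update.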

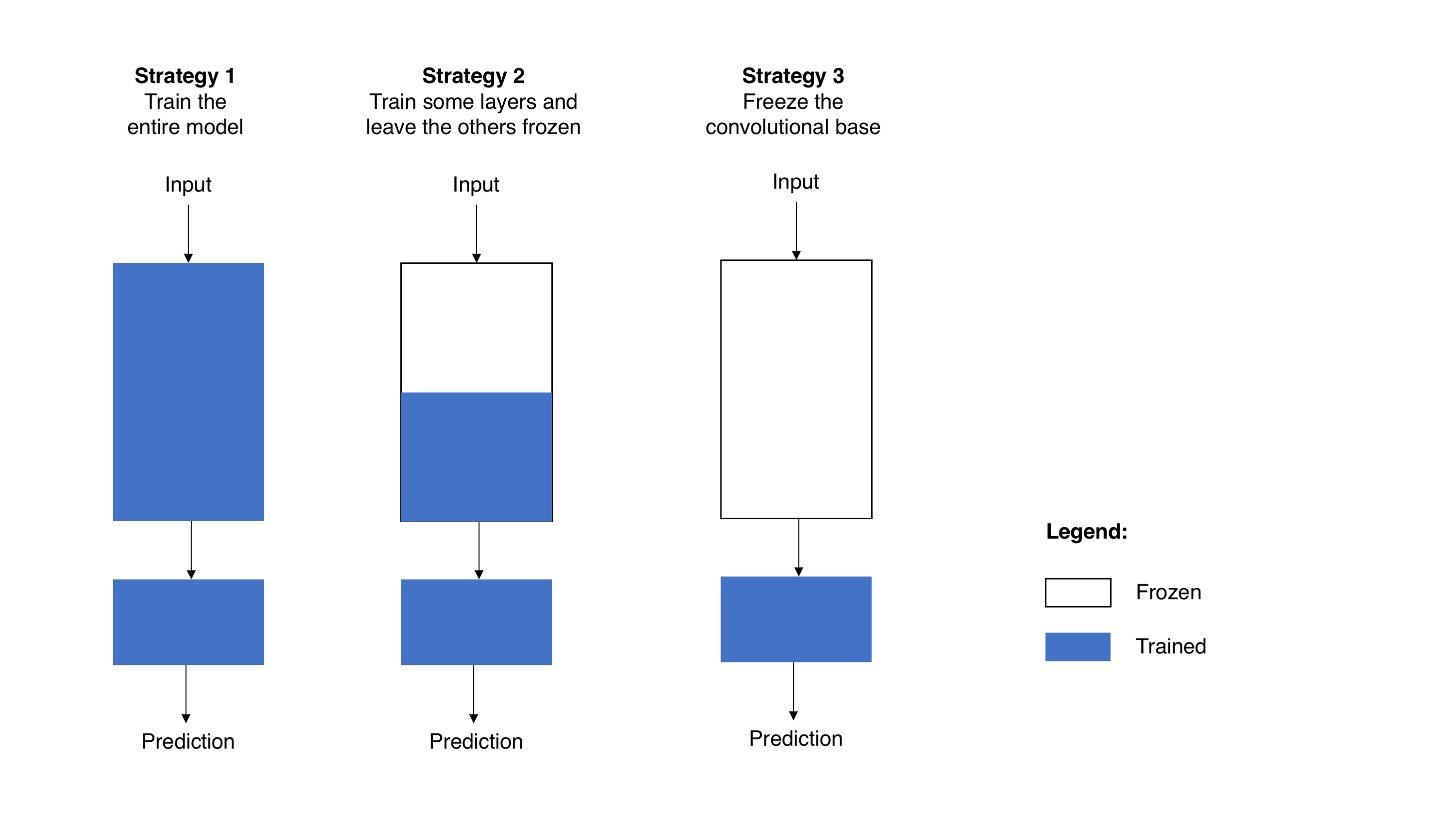

MobileNet V2 has many layers, so setting the entire model's `trainable` flag to `False` freezes all of them at once. It is generally recommended to train only the outermost (top) layers during the first phase of transfer learning, as those layers are the most data-specific. 11/15/2018: Transfer learning for Computer Vision.
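A short sketch of freezing a whole MobileNet V2 base with one flag might look like the following. It assumes `tf.keras.applications.MobileNetV2`; the 96x96 input size is an arbitrary choice, and `weights=None` is used here only to avoid a download (for real transfer learning you would normally load pretrained weights such as `weights="imagenet"`).

```python
import tensorflow as tf

# weights=None keeps this sketch offline; pretrained weights would be used in practice.
base_model = tf.keras.applications.MobileNetV2(
    input_shape=(96, 96, 3), include_top=False, weights=None
)

n_trainable_before = len(base_model.trainable_weights)

# One flag on the model freezes every layer inside it.
base_model.trainable = False

n_trainable_after = len(base_model.trainable_weights)
print("layers in base model:", len(base_model.layers))
print("trainable weight tensors before:", n_trainable_before)
print("trainable weight tensors after:", n_trainable_after)
```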

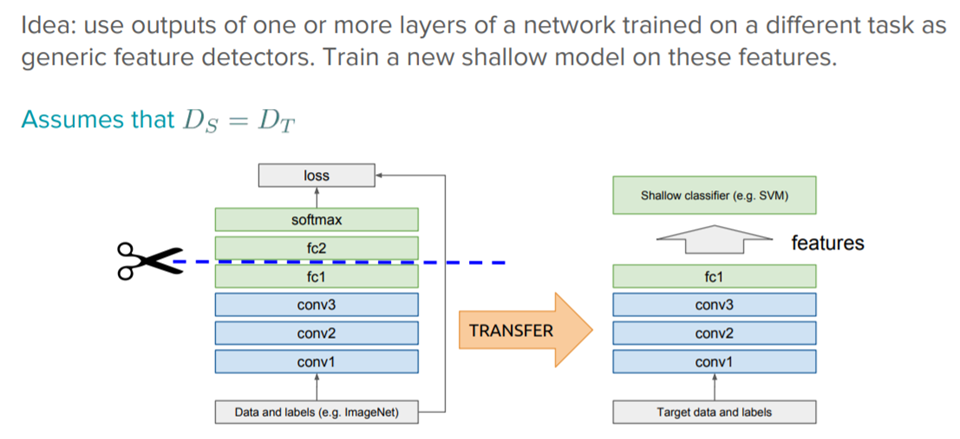

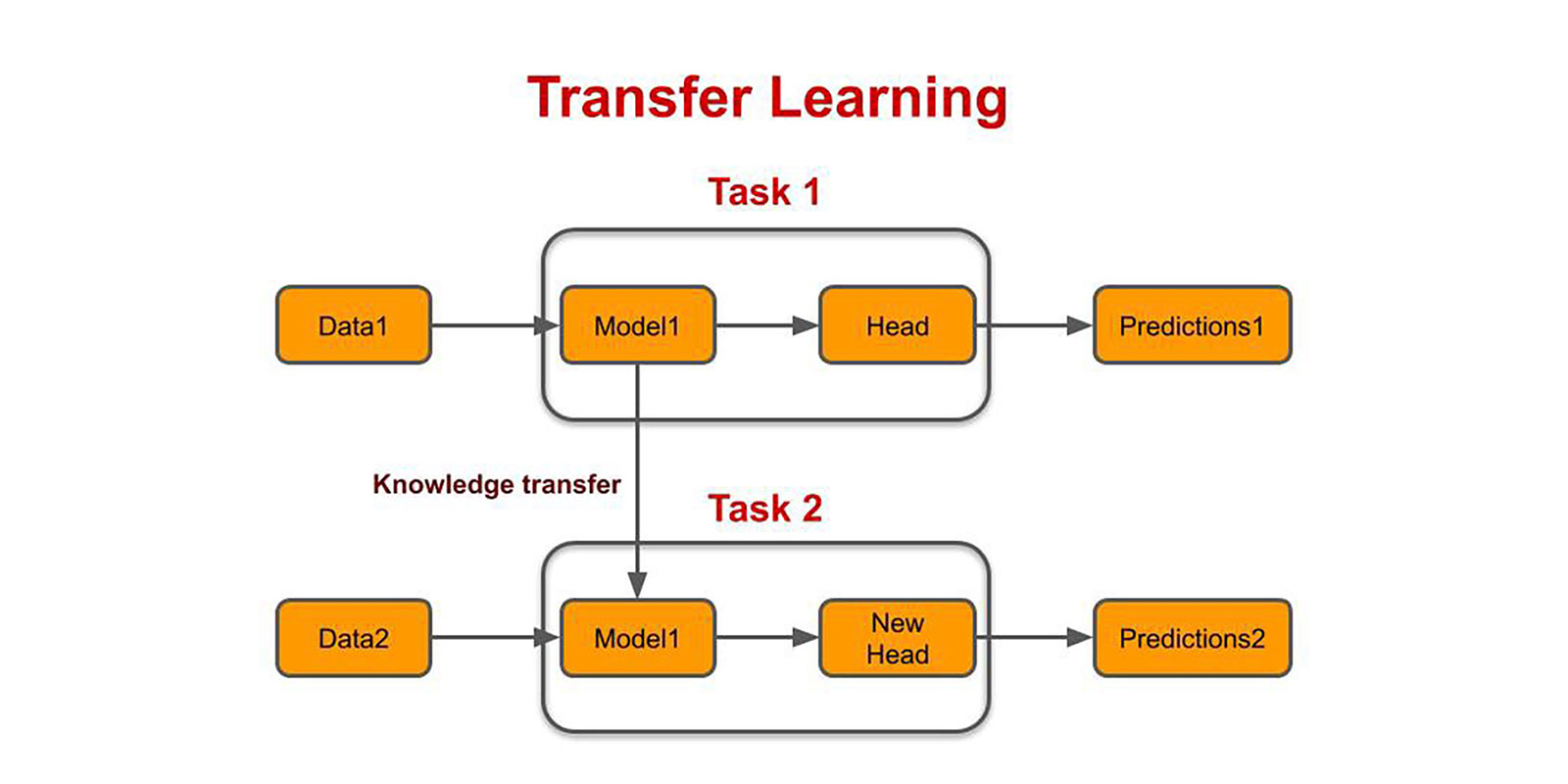

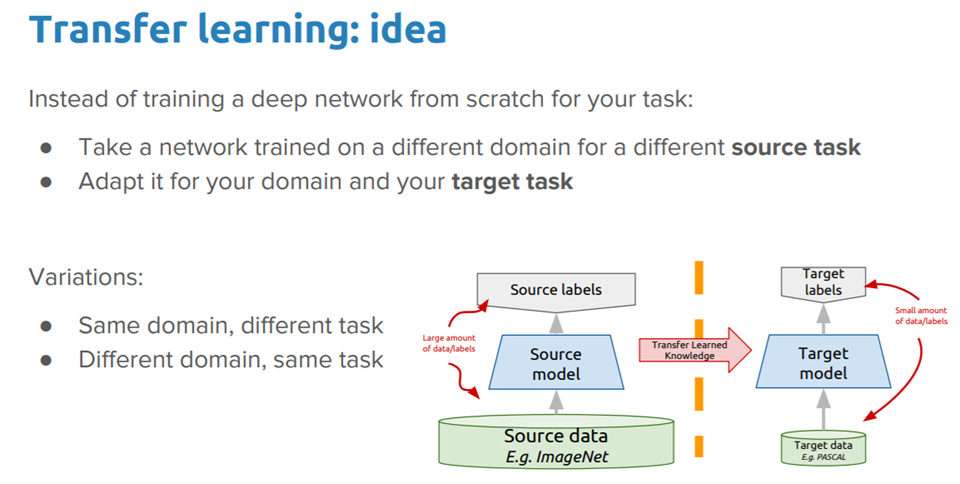

Typically in transfer learning we freeze most of the layers except the top few, and then fine-tune those top layers on a new image dataset. Instead of random initialization, we initialize the network with pretrained weights, such as those of a network trained on the 1000-class ImageNet dataset. 5/27/2020: In earlier stabs at transfer learning I necessarily froze the entire convolutional base, rendering it entirely untrainable.
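One way to freeze everything except the top few layers is to loop over `model.layers`, as in the sketch below. The cut-off `NUM_UNFROZEN = 20` is a hypothetical value chosen for illustration, and `weights=None` again stands in for pretrained weights so the snippet runs without a download.

```python
import tensorflow as tf

# Stand-in for a pretrained base; in practice pass weights="imagenet".
base_model = tf.keras.applications.MobileNetV2(
    input_shape=(96, 96, 3), include_top=False, weights=None
)

NUM_UNFROZEN = 20  # hypothetical: how many top layers to fine-tune

# Make the whole base trainable, then freeze everything below the cut-off.
base_model.trainable = True
for layer in base_model.layers[:-NUM_UNFROZEN]:
    layer.trainable = False

frozen_count = sum(not layer.trainable for layer in base_model.layers)
print("frozen layers:", frozen_count, "of", len(base_model.layers))
```

The right cut-off depends on the dataset and the architecture, so it is usually treated as something to tune rather than a fixed number.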

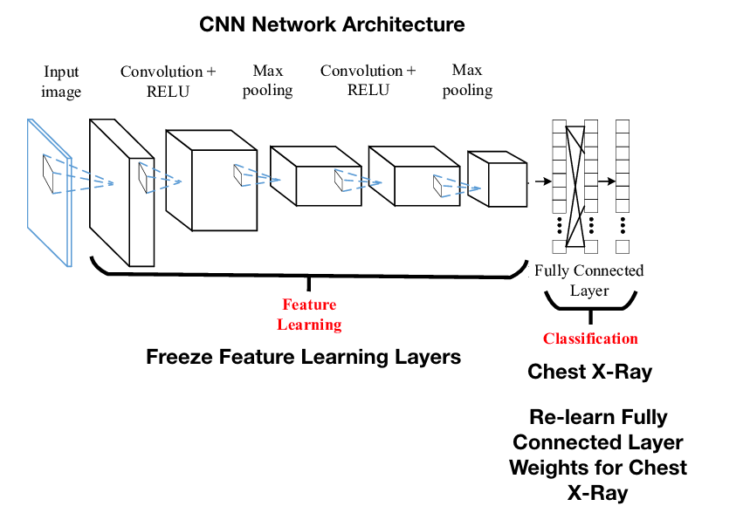

5/25/2019: Freezing layers is also a technique for accelerating neural network training, by progressively freezing hidden layers. For object recognition with a CNN, we freeze the early convolutional layers of the network and train only the last few layers, which make the prediction. How does freezing affect the speed of the model?
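Progressive freezing can be sketched as a schedule that decides, per epoch, which layers still receive updates. The linear schedule and the helper names `freeze_cutoffs` and `trainable_mask` below are illustrative assumptions, not a specific published method. The speed benefit comes from the fact that once the earliest layers are frozen, the backward pass no longer needs to compute gradients for them.

```python
def freeze_cutoffs(num_layers, first_cutoff=0.5):
    """Fraction of training after which each layer stops updating.

    Layer 0 (closest to the input) freezes at `first_cutoff`; the cutoff rises
    linearly so that the last layer trains for the whole run (cutoff 1.0).
    """
    if num_layers == 1:
        return [1.0]
    step = (1.0 - first_cutoff) / (num_layers - 1)
    return [first_cutoff + i * step for i in range(num_layers)]


def trainable_mask(epoch, total_epochs, cutoffs):
    """True for layers that should still be updated during `epoch` (0-based)."""
    progress = epoch / total_epochs
    return [progress < cutoff for cutoff in cutoffs]


cutoffs = freeze_cutoffs(num_layers=5, first_cutoff=0.5)
for epoch in range(0, 10, 3):
    print(epoch, trainable_mask(epoch, 10, cutoffs))
```

In a training loop, the mask for the current epoch would be applied to each layer's trainable flag before that epoch's updates.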

Freezing a layer prevents its weights from being modified. How can I do that? For instance, during transfer learning the first layers of the network are frozen, while the final layers are left open to modification.

The first one is a GlobalAveragePooling2D layer, which takes the output of the backbone as its input. Instead, part of the initial weights are frozen in place, and only the remaining weights are updated by the optimizer to reduce the loss. Deep learning has been used quite successfully for various computer vision tasks, such as object recognition and identification, using different CNN architectures.
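A possible arrangement of a frozen backbone followed by a `GlobalAveragePooling2D` layer and a small classifier is sketched below. The MobileNet V2 backbone, the 96x96 input, `NUM_CLASSES = 5`, and the single `Dense` output layer are all assumptions made for illustration; the original does not say which layers follow the pooling layer.

```python
import tensorflow as tf

NUM_CLASSES = 5  # hypothetical number of target classes

# Stand-in backbone; weights=None avoids a download in this sketch.
backbone = tf.keras.applications.MobileNetV2(
    input_shape=(96, 96, 3), include_top=False, weights=None
)
backbone.trainable = False  # keep the backbone weights fixed

inputs = tf.keras.Input(shape=(96, 96, 3))
# training=False keeps layers such as batch normalization in inference mode.
features = backbone(inputs, training=False)
# Average each feature map down to a single value per channel.
pooled = tf.keras.layers.GlobalAveragePooling2D()(features)
outputs = tf.keras.layers.Dense(NUM_CLASSES, activation="softmax")(pooled)
model = tf.keras.Model(inputs, outputs)

model.compile(optimizer="adam", loss="sparse_categorical_crossentropy")
print("output shape:", model.output_shape)
print("trainable weight tensors:", len(model.trainable_weights))
```

Only the kernel and bias of the final `Dense` layer are updated by the optimizer in this setup.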

Here we will freeze the weights for all of the ... The rest of the training looks as usual. 11/6/2020: This guide explains how to freeze YOLOv5 layers when transfer learning.
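In PyTorch, the usual way to freeze parameters is to set `requires_grad = False` on them. The sketch below applies that to a small stand-in network by matching parameter-name prefixes; it is not YOLOv5 itself, and the model, `FREEZE_PREFIXES`, and the random batch are hypothetical illustration values.

```python
import torch
from torch import nn

# Small stand-in network; nn.Sequential names its modules "0", "1", "2", ...
model = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.Conv2d(8, 16, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.AdaptiveAvgPool2d(1),
    nn.Flatten(),
    nn.Linear(16, 2),
)

FREEZE_PREFIXES = ("0.", "2.")  # hypothetical: freeze both convolution layers

for name, param in model.named_parameters():
    if name.startswith(FREEZE_PREFIXES):
        param.requires_grad = False  # excluded from gradient computation

# Hand only the still-trainable parameters to the optimizer.
optimizer = torch.optim.SGD(
    (p for p in model.parameters() if p.requires_grad), lr=0.01
)

# One ordinary training step on random data.
x = torch.randn(4, 3, 16, 16)
target = torch.randint(0, 2, (4,))
frozen_before = model[0].weight.detach().clone()

loss = nn.functional.cross_entropy(model(x), target)
optimizer.zero_grad()
loss.backward()
optimizer.step()

print("frozen conv unchanged:", torch.equal(model[0].weight, frozen_before))
```

Apart from the loop that sets `requires_grad`, the training step is the same as it would be without freezing.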