Transfer Learning with GPT-2

What Is a Language Model?

Now we can do a little transfer learning on GPT-2 and get better results than we could have dreamed of a few years ago. Starting from a general-purpose model, you can create a more customized model based on the user's input data. GPT-2 (Generative Pre-trained Transformer 2) was introduced by Radford et al. (2019).
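A language model assigns a probability to the next word given the words before it, and, via the chain rule, a probability to a whole sentence. The sketch below is a toy bigram model, not GPT-2: the class name `BigramLM` and the example sentences are hypothetical. It also mimics the "general-purpose to customized" idea in a very loose way: "fine-tuning" here just means adding counts from the user's data to counts from a general corpus, whereas real GPT-2 fine-tuning continues gradient descent on the pretrained network weights.

```python
from collections import Counter, defaultdict


class BigramLM:
    """Toy count-based language model estimating P(next word | previous word).

    Hypothetical illustration only; GPT-2 is a neural Transformer, not a count table.
    """

    def __init__(self):
        # counts[prev][nxt] = how often `nxt` followed `prev` in the training text
        self.counts = defaultdict(Counter)

    def train(self, sentences):
        # Counts accumulate across calls, so calling train() again on new data
        # "continues training" from the existing model instead of starting over.
        for sentence in sentences:
            tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
            for prev, nxt in zip(tokens, tokens[1:]):
                self.counts[prev][nxt] += 1

    def prob(self, prev, nxt):
        # Maximum-likelihood estimate of P(nxt | prev); 0.0 for an unseen context
        total = sum(self.counts[prev].values())
        return self.counts[prev][nxt] / total if total else 0.0

    def sentence_prob(self, sentence):
        # Chain rule: P(w1..wn) = product over t of P(w_t | w_{t-1})
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        p = 1.0
        for prev, nxt in zip(tokens, tokens[1:]):
            p *= self.prob(prev, nxt)
        return p


lm = BigramLM()
lm.train(["the cat sat", "the dog ran", "the dog sat"])  # "general" corpus
print(round(lm.prob("the", "cat"), 3))  # 0.333

lm.train(["the cat purred", "the cat sat"])  # "user" data shifts the estimates
print(round(lm.prob("the", "cat"), 3))  # 0.6
```

After the second `train()` call, "cat" becomes the most likely word after "the", which is the same kind of shift (toward the user's data) that fine-tuning aims for.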

While LSTMs are commonly used for text-generation problems similar to the one we attempt to solve, a few factors led us to our ultimate decision to use transfer learning with the GPT-2 transformer model. A related work is the paper "LSTM and GPT-2 Synthetic Speech Transfer Learning for Speaker Recognition to Overcome Data Scarcity." Kashgari is a production-level NLP transfer learning framework built on top of tf.keras for text labeling and text classification; it includes Word2Vec, BERT, and GPT-2 language embeddings.

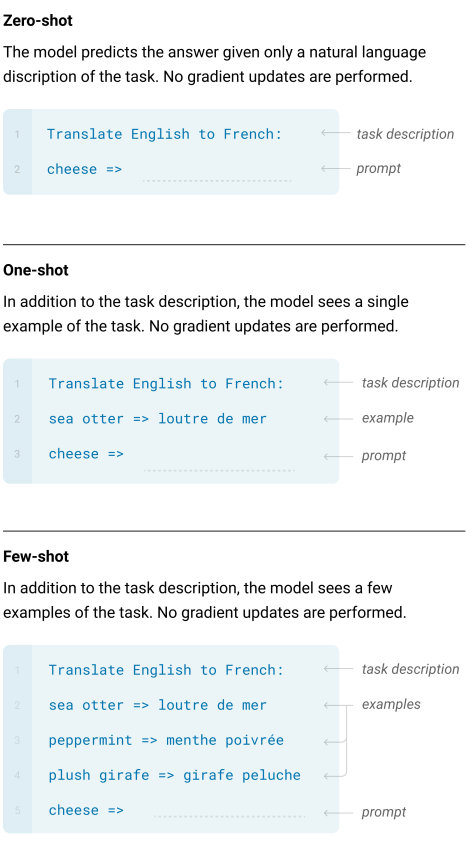

GPT-2 is an unsupervised language model trained on a large, diverse corpus. It can be used as-is for downstream tasks without architecture changes or parameter updates and, most importantly, without task-specific labeled data. Max Woolf created an amazing library that makes it super easy to fine-tune GPT-2.
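Using GPT-2 "as-is" means the task is expressed entirely in the input text, and the model simply continues it. The GPT-2 paper induced summarization by appending "TL;DR:" after an article, and translation by conditioning on example pairs written as "english sentence = french sentence" followed by a new English sentence and " =". The sketch below only builds such prompts; the function name and task keys are hypothetical, and the actual text generation step (feeding the prompt to a GPT-2 model) is left out.

```python
def zero_shot_prompt(task, text, examples=()):
    """Build a plain-text prompt that casts a task as next-word prediction.

    Hypothetical helper; no model weights are changed, only the input text.
    """
    if task == "summarize":
        # The model is expected to continue after "TL;DR:" with a summary
        return f"{text}\nTL;DR:"
    if task == "translate_en_fr":
        # Condition on example pairs, then leave the French side blank
        context = "\n".join(f"{en} = {fr}" for en, fr in examples)
        return f"{context}\n{text} =" if context else f"{text} ="
    raise ValueError(f"unknown task: {task}")


print(zero_shot_prompt("translate_en_fr", "cat", examples=[("hello", "bonjour")]))
# hello = bonjour
# cat =
```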

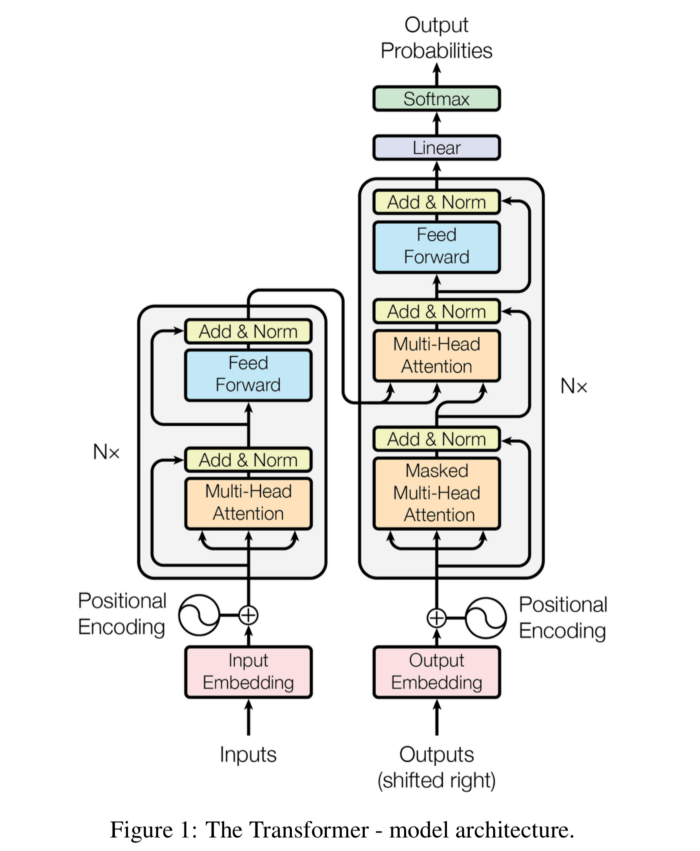

Tasks are the objective of the model. GPT-2 largely follows the architecture of the original GPT, with some modifications. Examples of inputs and corresponding outputs from the T5 model, from Google's 2019 paper "Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer."
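T5 treats every task as text in, text out: a short task prefix tells the model what to do, and even classification labels become words. The sketch below casts a few labeled examples into (input, target) string pairs using prefixes of the kind shown in the T5 paper ("translate English to German:", "summarize:", "cola sentence:"). The function name and the example dictionary keys are hypothetical, and the exact label words should be checked against the paper before relying on them.

```python
def to_text_to_text(task, example):
    """Cast a labeled example into an (input text, target text) pair, T5-style.

    Hypothetical helper; the dict keys used here are illustrative only.
    """
    if task == "translate_en_de":
        return f"translate English to German: {example['en']}", example["de"]
    if task == "summarize":
        return f"summarize: {example['document']}", example["summary"]
    if task == "cola":
        # Grammatical acceptability: the class label is emitted as a word
        target = "acceptable" if example["label"] == 1 else "not acceptable"
        return f"cola sentence: {example['sentence']}", target
    raise ValueError(f"unknown task: {task}")


print(to_text_to_text("cola", {"sentence": "The course is jumping well.", "label": 0}))
# ('cola sentence: The course is jumping well.', 'not acceptable')
```

Because inputs and targets are both strings, one model, loss, and decoding procedure can serve all tasks.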

Transformer-based models are achieving state-of-the-art results in a wide range of NLP tasks. All sentences are selected from Reddit. We're going to take all of Max Woolf's excellent work and use that interface for training.
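Whatever training interface is used, fine-tuning GPT-2 usually starts from a plain-text corpus in which separate documents are marked off, then split into blocks no longer than the model's context window (1,024 tokens for GPT-2). The sketch below shows both steps under stated assumptions: it uses GPT-2's `<|endoftext|>` token as the document separator (whether a given library expects exactly this layout is an assumption), and it splits on whitespace as a stand-in for GPT-2's real byte-pair-encoding tokenizer. The helper names are hypothetical and are not the API of Max Woolf's library.

```python
END_OF_TEXT = "<|endoftext|>"  # GPT-2's end-of-document token


def build_training_text(documents):
    """Join non-empty documents into one training string, separated by END_OF_TEXT.

    Hypothetical helper for preparing a single training file.
    """
    docs = [d.strip() for d in documents if d.strip()]
    return f"\n{END_OF_TEXT}\n".join(docs)


def chunk(tokens, block_size=1024):
    """Split a token list into non-overlapping blocks of exactly block_size.

    A trailing partial block is dropped; padding it is the other common choice.
    """
    return [
        tokens[i:i + block_size]
        for i in range(0, len(tokens) - block_size + 1, block_size)
    ]


text = build_training_text(["First post.", "  Second post.  ", ""])
tokens = text.split()  # whitespace split: a stand-in for real BPE tokenization
print(text)
# First post.
# <|endoftext|>
# Second post.
print(chunk(tokens, block_size=2))
# [['First', 'post.'], ['<|endoftext|>', 'Second']]
```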

If I want to train it with GPT-2, I think the only parameter I should set is `--model_checkpoint gpt2`. But first, there is no `set_num_special_tokens` method in `modeling_gpt2`; I replaced it with `set_tied` in `train.py`, but I got some type errors.

However, I am unable to fine-tune GPT-2 medium on the same instance with the exact same hyperparameters: I'm getting out-of-memory errors, presumably because GPT-2 medium is much larger.

In speech recognition problems, data scarcity often poses an issue because of humans' limited willingness to provide large amounts of data for learning and classification.
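The out-of-memory problem with GPT-2 medium can be sanity-checked with arithmetic. Each GPT-2 Transformer block has about 12·d² weights (attention and MLP) plus biases and LayerNorm parameters, and the embeddings add (vocabulary + context) · d, with the output layer tied to the token embedding. The sketch below counts parameters for GPT-2 small (12 layers, d = 768) and medium (24 layers, d = 1024), then estimates memory for full fine-tuning in fp32 with Adam, assuming 16 bytes per parameter (weights, gradients, and Adam's two moment buffers). Activation memory, which grows with batch size and sequence length, is deliberately not included, so real usage is higher.

```python
def gpt2_param_count(n_layer, d_model, vocab_size=50257, n_ctx=1024):
    """Exact parameter count for a GPT-2-style model with tied input/output embeddings."""
    # Per block: QKV projection (3d^2 + 3d) + attention output (d^2 + d)
    #          + MLP up (4d^2 + 4d) + MLP down (4d^2 + d) + two LayerNorms (4d)
    per_block = 12 * d_model**2 + 13 * d_model
    embeddings = vocab_size * d_model + n_ctx * d_model  # token + position tables
    final_layernorm = 2 * d_model
    return n_layer * per_block + embeddings + final_layernorm


def adam_fp32_bytes(n_params):
    """Weights + gradients + Adam m and v, each 4-byte fp32; excludes activations."""
    return 16 * n_params


small = gpt2_param_count(n_layer=12, d_model=768)
medium = gpt2_param_count(n_layer=24, d_model=1024)
print(small, medium)  # 124439808 354823168
print(adam_fp32_bytes(small) / 1e9, adam_fp32_bytes(medium) / 1e9)
# roughly 1.99 GB vs 5.68 GB before any activations
```

Medium has about 2.85 times as many parameters as small, so hyperparameters (batch size, sequence length) that fit for small can easily run out of memory for medium on the same GPU.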