Transfer Learning: How Many Layers?

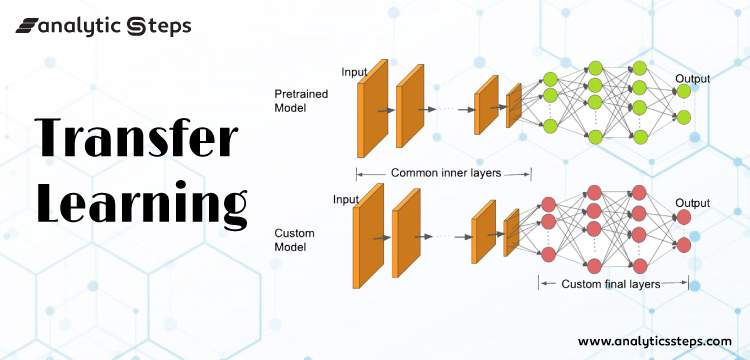

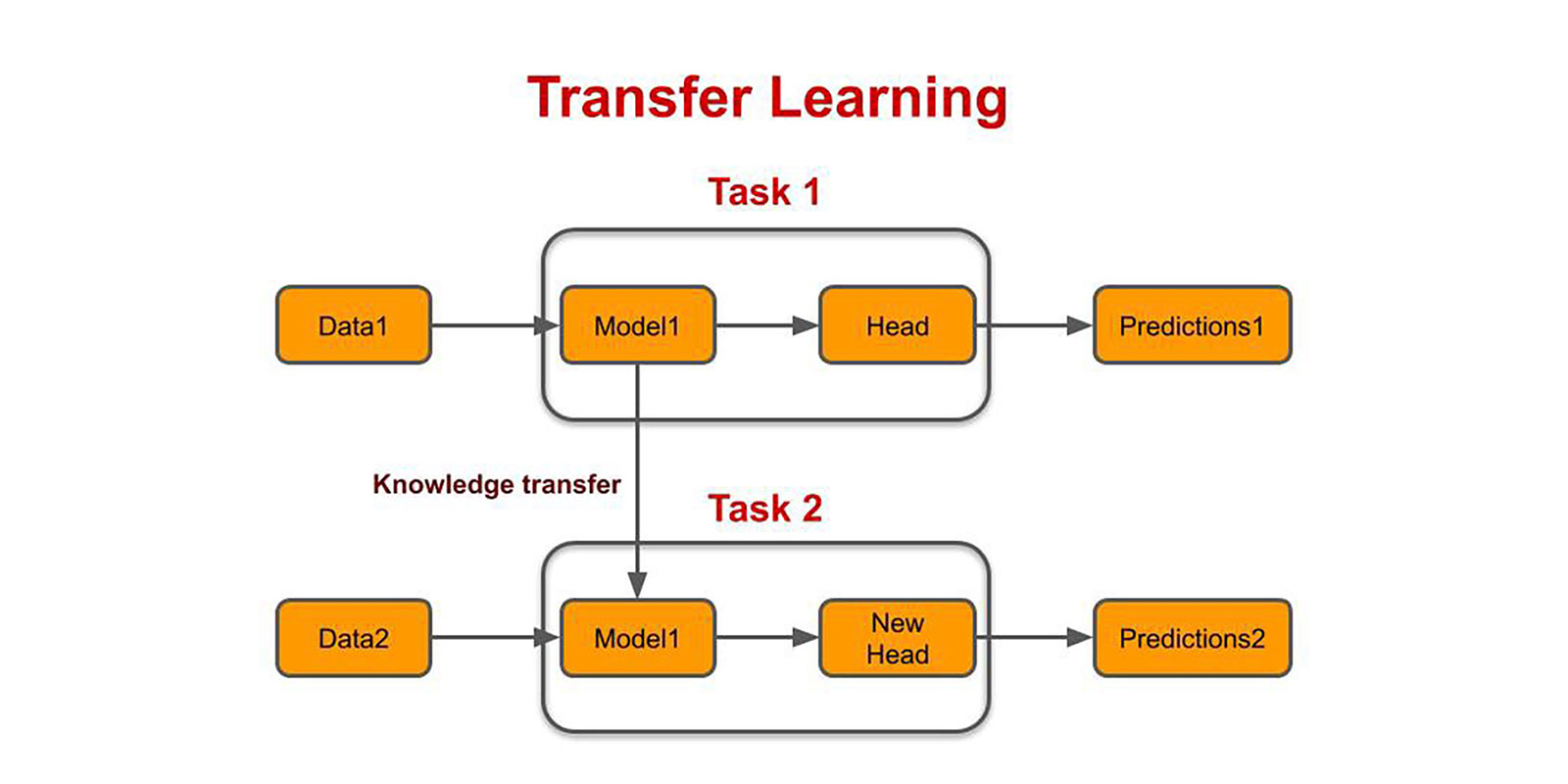

A deep learning model that has already been trained on a large dataset is called a pre-trained model.

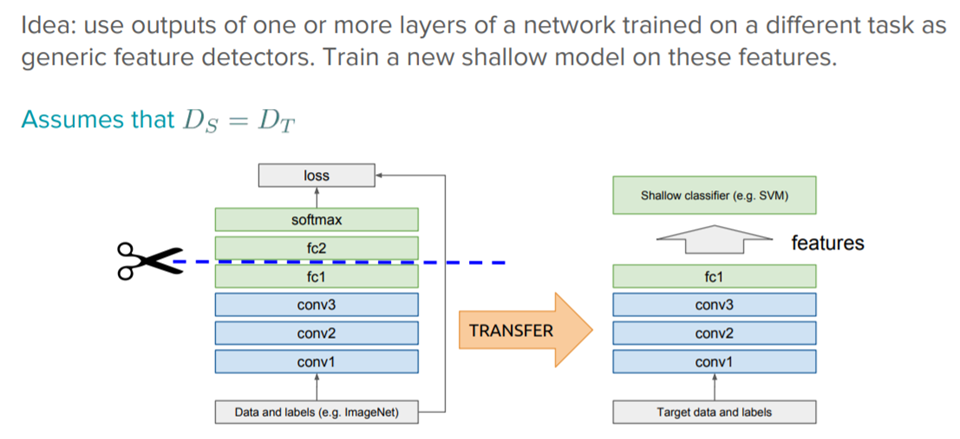

A saved Keras model can be reloaded with a call such as `tl_cnn_model_2 = load_model('vgg19_65epochs_featext_method2.h5', custom_objects={'specificity': specificity})`, after which you can check the total number of trainable parameters. In MATLAB, the folder for the pretrained network is set with `pretrainedFolder = fullfile(tempdir, 'pretrainedNetwork');`. If the dataset being trained on is small, it is better practice to keep the majority of the layers as they are and train just the final few layers.

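Load the saved model. Layers are frozen by setting `layer.trainable = False` inside a loop over a slice of `conv_base.layers`, such as `for layer in conv_base.layers[:-13]:`, which covers every layer except the last 13.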

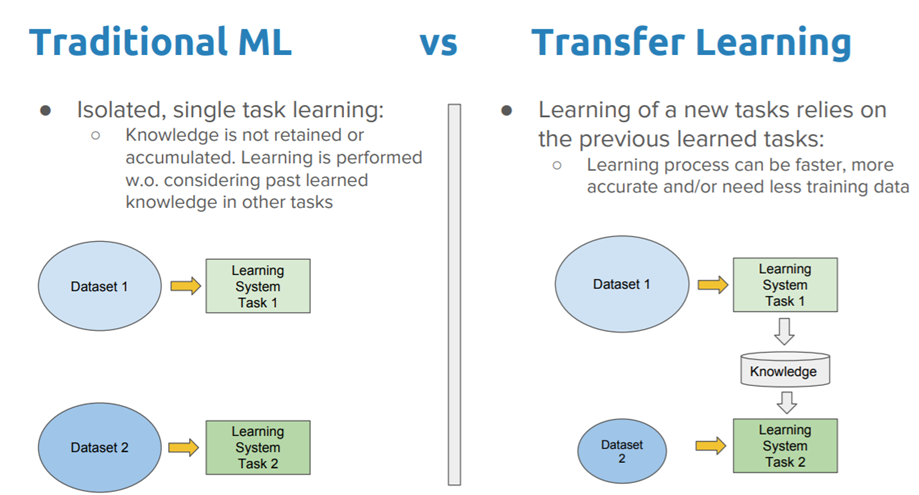

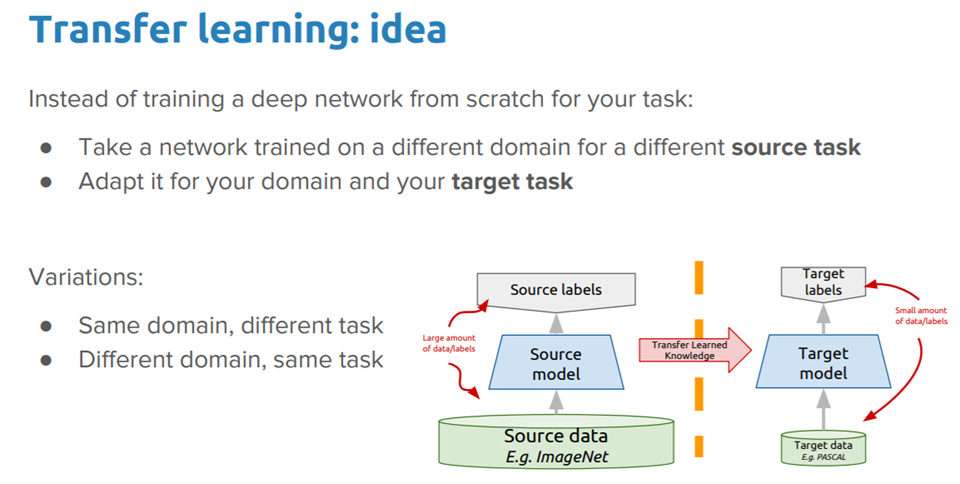

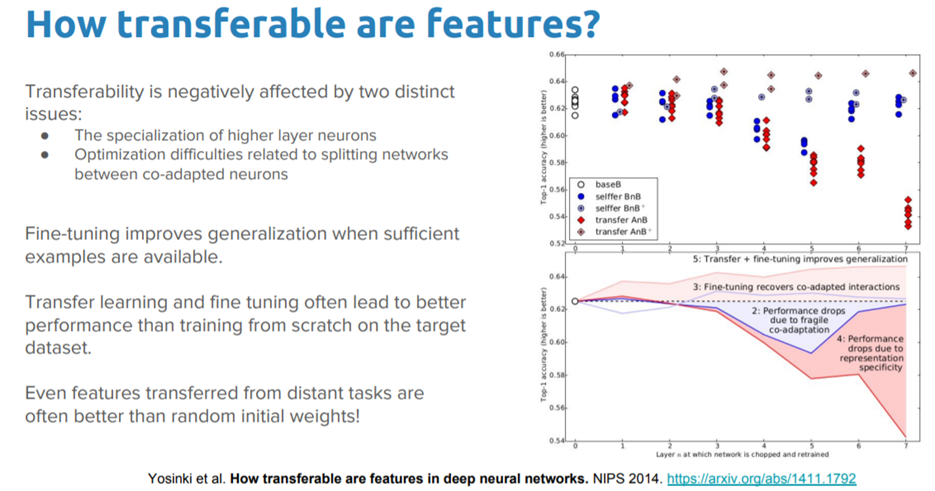

Turns out it was all 36 of them. Keeping most layers frozen helps prevent the network from overfitting. Transfer learning is a technique where a deep learning model trained on a large dataset is used to perform similar tasks on another dataset.

The number of layers to train is a parameter you can experiment with yourself. In deep learning, transfer learning is a technique whereby a neural network model is first trained on a problem similar to the one being solved. In MATLAB, the pretrained network is loaded with `data = load(pretrainedNetwork);`.
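As a rough illustration of treating the number of trainable layers as a tunable parameter, the sketch below makes only the last `n` layers of a model trainable and records how many layers end up trainable for a few candidate values. The helper `unfreeze_last_n`, the tiny stand-in model, and the candidate values are assumptions made for illustration and do not come from the original text.

```python
from tensorflow import keras


def unfreeze_last_n(model, n):
    """Hypothetical helper: freeze all layers except the last n.

    Returns the number of layers left trainable.
    """
    layers = model.layers
    cutoff = max(len(layers) - n, 0)
    for i, layer in enumerate(layers):
        layer.trainable = i >= cutoff
    return sum(layer.trainable for layer in layers)


# Small stand-in for a pre-trained base (assumption: a real base is far deeper).
model = keras.Sequential([
    keras.Input(shape=(32, 32, 3)),
    keras.layers.Conv2D(8, 3, activation="relu"),
    keras.layers.Conv2D(8, 3, activation="relu"),
    keras.layers.GlobalAveragePooling2D(),
    keras.layers.Dense(16, activation="relu"),
    keras.layers.Dense(1, activation="sigmoid"),
])

# Try several values of n; in a real experiment you would recompile and
# train after each change, then compare validation metrics.
trainable_counts = {n: unfreeze_last_n(model, n) for n in (1, 2, 4)}
print(trainable_counts)
```

In Keras, a change to `trainable` takes effect for training only after the model is compiled again, so each candidate value would need its own `compile` and `fit` run.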

Instantiate a base model and load pre-trained weights into it. Check how many layers it has with `len(base_model.layers)`, choose the layer to fine-tune from (`fine_tune_at = 100`), and freeze all the layers before it by iterating with `for layer in base_model.layers[:fine_tune_at]:` and setting `layer.trainable = False`. In this application, the network accepts a 3-D image: width, height, and depth for the color channels.
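The freezing steps described above might look like the following in Keras. This is a minimal sketch under stated assumptions: the choice of `MobileNetV2` and the 160x160x3 input shape are not given in the original, and `weights=None` is used only so the sketch runs without a download. For real transfer learning you would pass `weights="imagenet"`.

```python
from tensorflow import keras

# 3-D input: width, height, and color depth (the sizes are assumed).
IMG_SHAPE = (160, 160, 3)

# Assumption: MobileNetV2 as the base. weights=None skips the download;
# use weights="imagenet" to actually load pre-trained weights.
base_model = keras.applications.MobileNetV2(
    input_shape=IMG_SHAPE, include_top=False, weights=None
)
base_model.trainable = True

print("Number of layers in the base model:", len(base_model.layers))

# Fine-tune from this layer onwards.
fine_tune_at = 100

# Freeze all the layers before the fine_tune_at layer.
for layer in base_model.layers[:fine_tune_at]:
    layer.trainable = False
```

Moving `fine_tune_at` toward zero unfreezes more of the network, which generally needs more data to train without overfitting.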

For completeness, the datasets here are prepared from images that contain a dog or a cat. The number of layers in the network that are unfrozen and retrained should scale with the size of the new dataset. To freeze all layers in the base model, set `trainable = False`.
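Freezing the whole base model and training only a new classification head could be sketched as follows. The `MobileNetV2` base, the input shape, the single-logit head for dog versus cat, and the optimizer settings are all assumptions for illustration. `weights=None` keeps the sketch runnable offline, and you would use `weights="imagenet"` in practice.

```python
from tensorflow import keras

# Assumption: MobileNetV2 base with a 160x160 RGB input.
base_model = keras.applications.MobileNetV2(
    input_shape=(160, 160, 3), include_top=False, weights=None
)

# Freeze all layers in the base model.
base_model.trainable = False

# New head: pooling followed by a single logit (dog vs. cat).
inputs = keras.Input(shape=(160, 160, 3))
x = base_model(inputs, training=False)  # keep BatchNorm in inference mode
x = keras.layers.GlobalAveragePooling2D()(x)
outputs = keras.layers.Dense(1)(x)
model = keras.Model(inputs, outputs)

model.compile(
    optimizer=keras.optimizers.Adam(learning_rate=1e-4),
    loss=keras.losses.BinaryCrossentropy(from_logits=True),
    metrics=["accuracy"],
)

# Only the Dense head's kernel and bias remain trainable.
num_trainable = len(model.trainable_weights)
print("Trainable weight tensors:", num_trainable)
```

With a small dataset this fully frozen setup is a reasonable starting point. Layers near the top of the base can be unfrozen later if more data is available.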