Transfer Learning How Many Layers To Freeze

The 2.5M parameters in MobileNet are frozen, but there are 1.2K trainable parameters in the Dense layer.

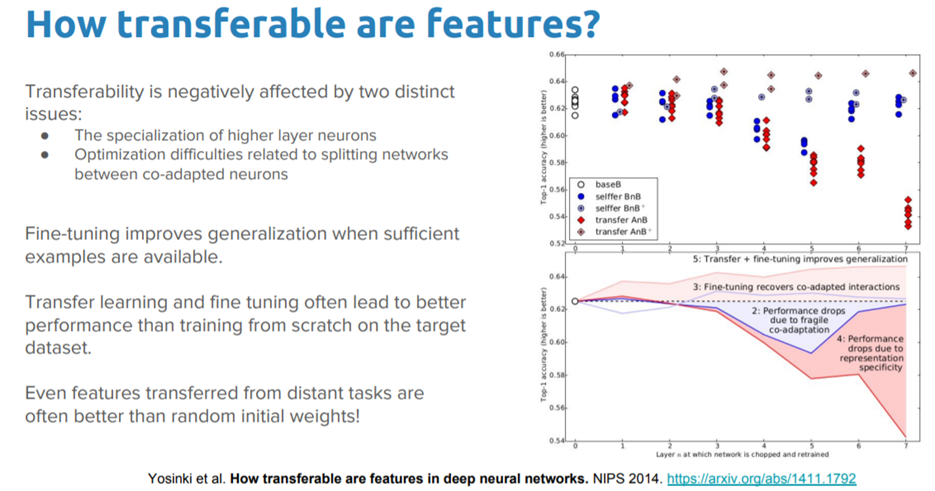

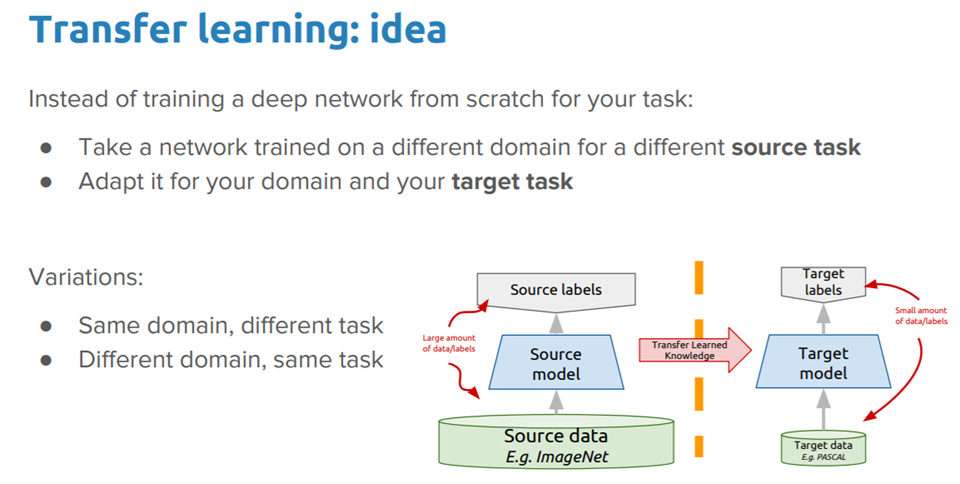

If the model underfits, unfreeze half of the fourth layer. Remember: the deeper into the network ... However, I notice that none of the layers are frozen, as a Keras blog post recommends.

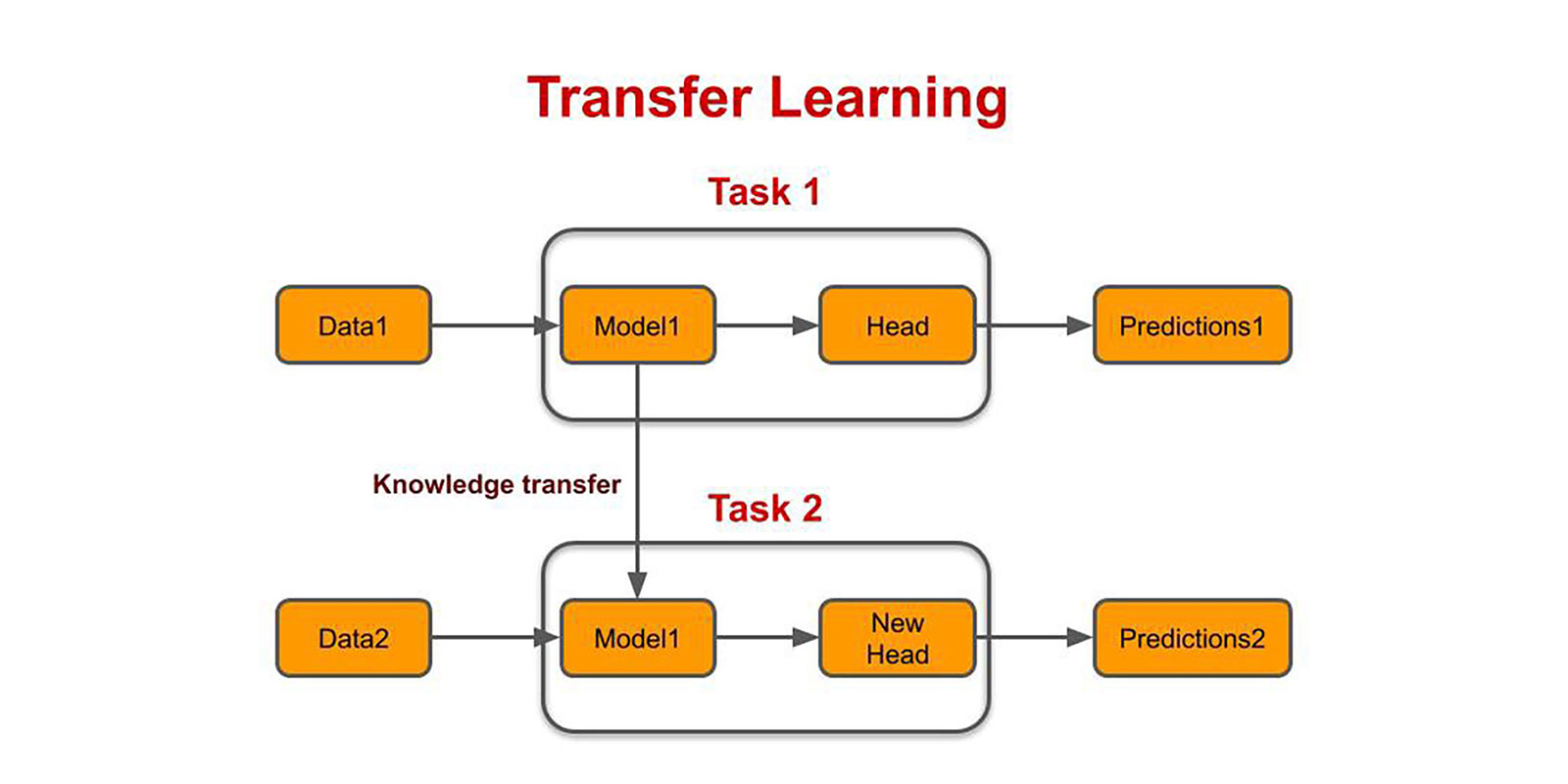

Typically, in transfer learning we freeze most of the layers except the top few, and then fine-tune those top layers on the new image dataset. Freeze all layers in the base model by setting `trainable = False`. For instance, during transfer learning the first layers of the network are frozen while the end layers are left open to modification.

Extract all layers except the last three from the pretrained network. A. Modifying only the last layer while keeping the others frozen: the final layer of VGG16 performs a softmax regression over the 1000 classes in ImageNet.

The model uses VGG16 weights pretrained on ImageNet for transfer learning. Use the supporting function `freezeWeights` to set the learning rates to zero in the first 10 layers. The new layer graph contains the same layers, but with the learning rates of the earlier layers set to zero.
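Setting a layer's learning rate to zero freezes it because the update step no longer moves its weights. The sketch below illustrates that idea in plain Python. It is not the `freezeWeights` function itself: the helper names (`make_layers`, `freeze_first`, `sgd_step`), the toy one-weight layers, and the gradient values are all hypothetical and chosen only for illustration.

```python
# Framework-free sketch: freezing layers by zeroing a per-layer learn-rate factor.
# All names and numbers below are illustrative assumptions, not a library API.

def make_layers(n):
    """Build n toy layers, each with a single weight and a learn-rate factor of 1."""
    return [{"name": f"layer_{i + 1}", "weight": 0.5, "lr_factor": 1.0}
            for i in range(n)]

def freeze_first(layers, k):
    """Return a copy of the layers in which the first k have lr_factor 0."""
    return [dict(layer, lr_factor=0.0) if i < k else dict(layer)
            for i, layer in enumerate(layers)]

def sgd_step(layers, grads, base_lr=0.1):
    """Plain SGD update: w <- w - base_lr * lr_factor * grad."""
    return [dict(layer, weight=layer["weight"] - base_lr * layer["lr_factor"] * g)
            for layer, g in zip(layers, grads)]

layers = freeze_first(make_layers(13), 10)     # freeze the first 10 of 13 layers
updated = sgd_step(layers, grads=[1.0] * 13)   # pretend every gradient is 1.0

frozen_unchanged = all(u["weight"] == 0.5 for u in updated[:10])
later_changed = all(u["weight"] != 0.5 for u in updated[10:])
print(frozen_unchanged, later_changed)
```

The layer list keeps the same length and names after freezing. Only the learn-rate factors differ, which matches the description of a graph that contains the same layers with the earlier learning rates set to zero.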

VGG16 has a total of 13 convolution layers using 3 x 3 convolution filters, with max pooling layers for downsampling, followed by two fully connected hidden layers of 4096 units each and a dense layer of 1000 units, where each unit represents one of the image categories in the ImageNet database. Freeze all but the penultimate layer and re-train the last Dense layer. Its parameters are divided between two `tf.Variable` objects, the weights and the biases.
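To get a feel for how much of VGG16 stays frozen when only the last Dense layer is re-trained, the parameter counts can be worked out by hand. The sketch below assumes the standard VGG16 channel widths, a 224 x 224 RGB input (so the last pooling layer outputs 7 x 7 x 512 values), and a bias term in every layer. None of these figures are stated in the text above, and the function names are made up for this example.

```python
# Parameter arithmetic for a VGG16-style network (assumed standard layout).
CONV_CHANNELS = [64, 64, 128, 128, 256, 256, 256, 512, 512, 512, 512, 512, 512]
DENSE_UNITS = [4096, 4096, 1000]

def conv_params(in_ch, out_ch, k=3):
    """Weights of a k x k convolution plus one bias per output channel."""
    return (k * k * in_ch + 1) * out_ch

def dense_params(in_units, out_units):
    """Weight matrix plus one bias per output unit."""
    return in_units * out_units + out_units

def vgg16_param_counts(input_channels=3, flattened=7 * 7 * 512):
    """Return a list of (layer kind, parameter count), in network order."""
    counts = []
    in_ch = input_channels
    for out_ch in CONV_CHANNELS:
        counts.append(("conv", conv_params(in_ch, out_ch)))
        in_ch = out_ch
    in_units = flattened
    for units in DENSE_UNITS:
        counts.append(("dense", dense_params(in_units, units)))
        in_units = units
    return counts

counts = vgg16_param_counts()
total = sum(n for _, n in counts)
trainable = counts[-1][1]      # only the final 1000-unit Dense layer is re-trained
frozen = total - trainable
print(total, trainable, frozen)  # 138357544 4097000 134260544
```

Under these assumptions the re-trained layer holds 4,096,000 weights and 1,000 biases, which is about 3% of the network's parameters.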

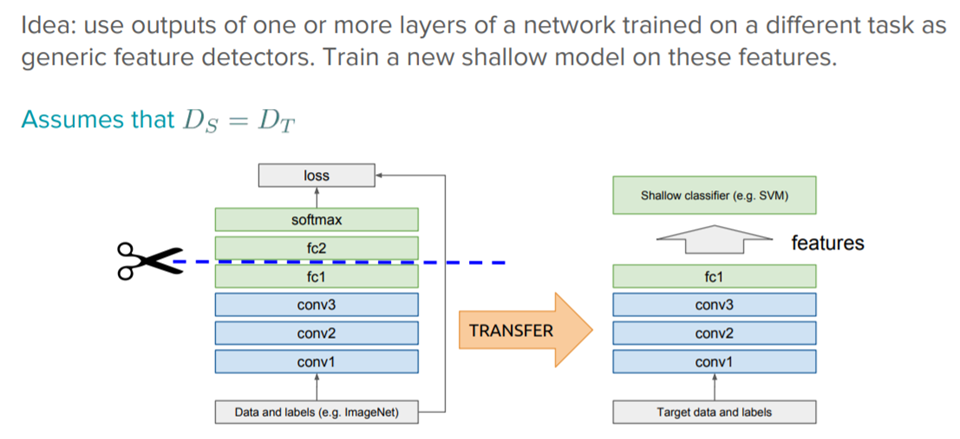

Layer freezing is the process of fixing the weights of specific layers in a deep learning network so that these weights don't change during training. This means that if a model is tasked with object detection, passing an image through a frozen layer during the first epoch and passing the same image through it again during the second epoch produces the same output from that layer (provided the layers before it are frozen as well). Create a new model on top of the output of one or several layers.
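The frozen-layer behaviour described above can be checked on a toy model. This minimal sketch assumes a single frozen weight `w1` feeding a single trainable weight `w2`, trained with squared error on one example. The `train` function, the input, the target, and the learning rate are all hypothetical values picked for illustration.

```python
# Toy model: frozen layer h = w1 * x, trainable head y = w2 * h.
def train(epochs=2, lr=0.01):
    w1 = 2.0                 # frozen: never updated
    w2 = 0.0                 # trainable head
    x, target = 3.0, 12.0    # one training example (illustrative values)
    history = []
    for epoch in range(epochs):
        h = w1 * x           # output of the frozen layer
        y = w2 * h           # prediction of the head
        # Gradient of the squared error (y - target)**2 with respect to w2.
        grad_w2 = 2.0 * (y - target) * h
        w2 -= lr * grad_w2   # only the head is updated
        history.append({"epoch": epoch + 1, "frozen_output": h, "prediction": y})
    return w1, w2, history

w1, w2, history = train()
for row in history:
    print(row)
```

The `frozen_output` value is identical in both epochs while `prediction` changes, because only `w2` receives updates.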