Transfer Learning with HuggingFace

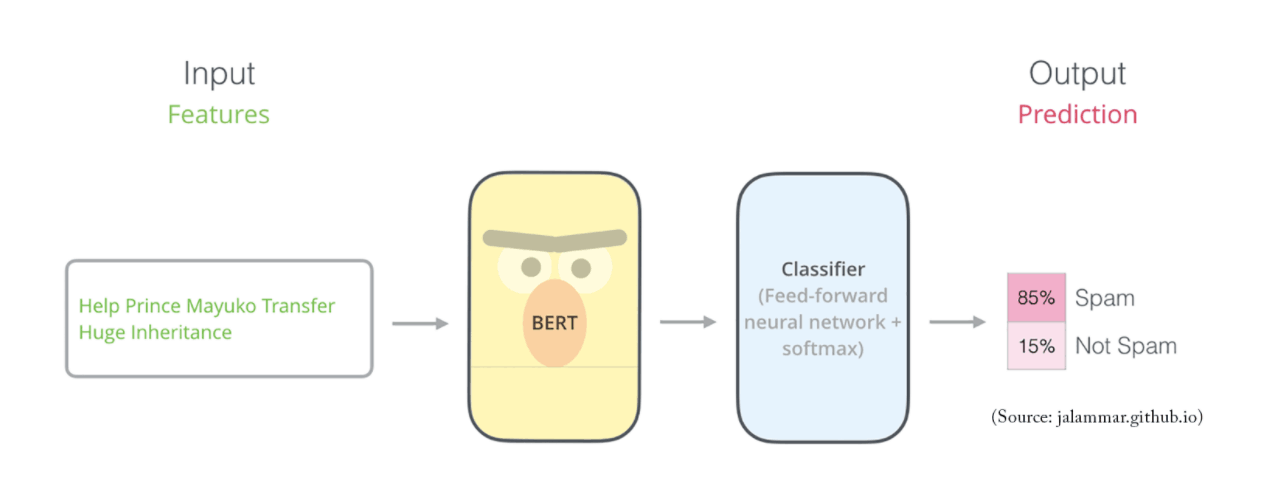

5/11/2020: Sentence Correctness classifier using Transfer Learning with HuggingFace BERT.

How to Build a State-of-the-Art Conversational AI with Transfer Learning.

10/21/2019: To install and use the training and inference scripts, clone the repo and install the requirements.

7/20/2020: Transfer Learning in NLP.

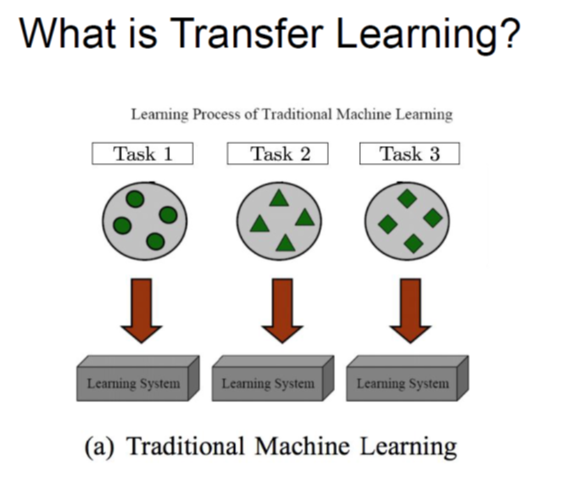

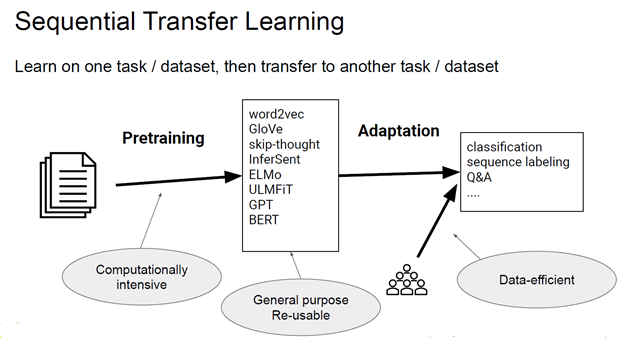

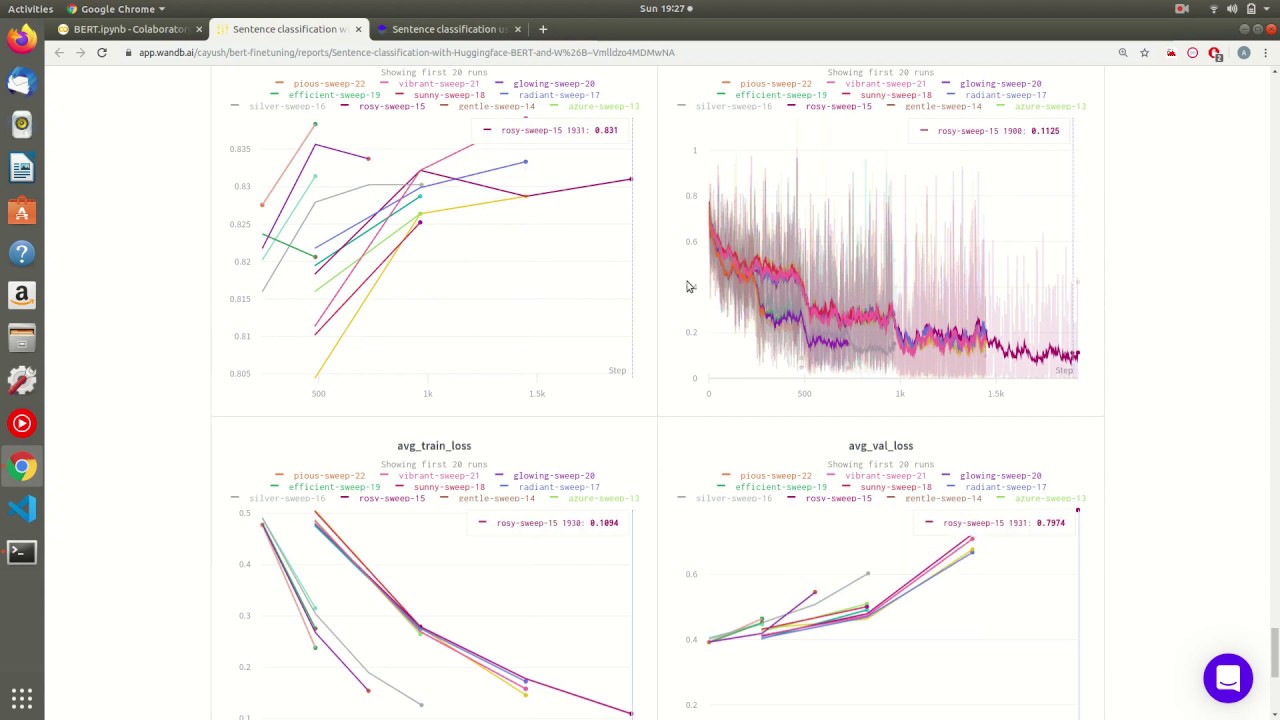

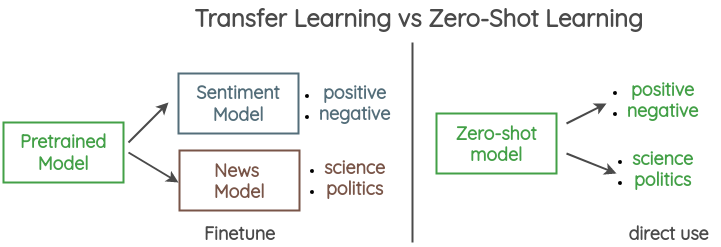

In this tutorial, we'll do transfer learning for NLP in three steps.

4/19/2020: An Introduction to Transfer Learning and HuggingFace, by Thomas Wolf, Chief Science Officer at HuggingFace. Transfer learning is a technique that consists of training a machine learning model on one task and then applying the knowledge it gained to a different but related task.

5/9/2019: Our secret sauce was a large-scale pre-trained language model, OpenAI GPT, combined with a transfer learning fine-tuning technique.
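To make the definition of transfer learning concrete, here is a minimal sketch in plain Python under a hypothetical toy setup (it is not HuggingFace's method). A linear model is first trained on a data-rich "source" task, y = 2·x1 + 3·x2, and its weights are then reused as the starting point for a related "target" task whose labels are shifted by 0.5. With the same small fine-tuning budget, starting from the source weights should reach a much lower target loss than starting from zero.

```python
# Minimal sketch of transfer learning by fine-tuning (hypothetical toy setup):
# source task: y = 2*x1 + 3*x2; related target task: the same relation + 0.5.


def make_data(offset):
    """Build a 5x5 grid of inputs in [0, 1]^2 with targets 2*x1 + 3*x2 + offset."""
    points = [(i / 4, j / 4) for i in range(5) for j in range(5)]
    return [((x1, x2), 2 * x1 + 3 * x2 + offset) for x1, x2 in points]


def mse(params, data):
    """Mean squared error of the linear model w1*x1 + w2*x2 + b."""
    w1, w2, b = params
    return sum((w1 * x1 + w2 * x2 + b - y) ** 2 for (x1, x2), y in data) / len(data)


def train(params, data, steps, lr=0.1):
    """Full-batch gradient descent on the MSE, starting from `params`."""
    w1, w2, b = params
    n = len(data)
    for _ in range(steps):
        g1 = g2 = gb = 0.0
        for (x1, x2), y in data:
            err = w1 * x1 + w2 * x2 + b - y
            g1 += 2 * err * x1 / n
            g2 += 2 * err * x2 / n
            gb += 2 * err / n
        w1, w2, b = w1 - lr * g1, w2 - lr * g2, b - lr * gb
    return (w1, w2, b)


source_data = make_data(offset=0.0)
target_data = make_data(offset=0.5)

# 1. "Pre-train" on the source task until convergence.
source_params = train((0.0, 0.0, 0.0), source_data, steps=2000)

# 2. Fine-tune on the related target task with a small step budget,
#    starting either from the source weights (transfer) or from zero (scratch).
transfer_params = train(source_params, target_data, steps=20)
scratch_params = train((0.0, 0.0, 0.0), target_data, steps=20)

transfer_loss = mse(transfer_params, target_data)
scratch_loss = mse(scratch_params, target_data)
print(f"transfer: {transfer_loss:.4f}  scratch: {scratch_loss:.4f}")
```

The same idea carries over to NLP: a pre-trained language model plays the role of `source_params`, and fine-tuning adapts it to the downstream task with far less data and compute than training from scratch.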

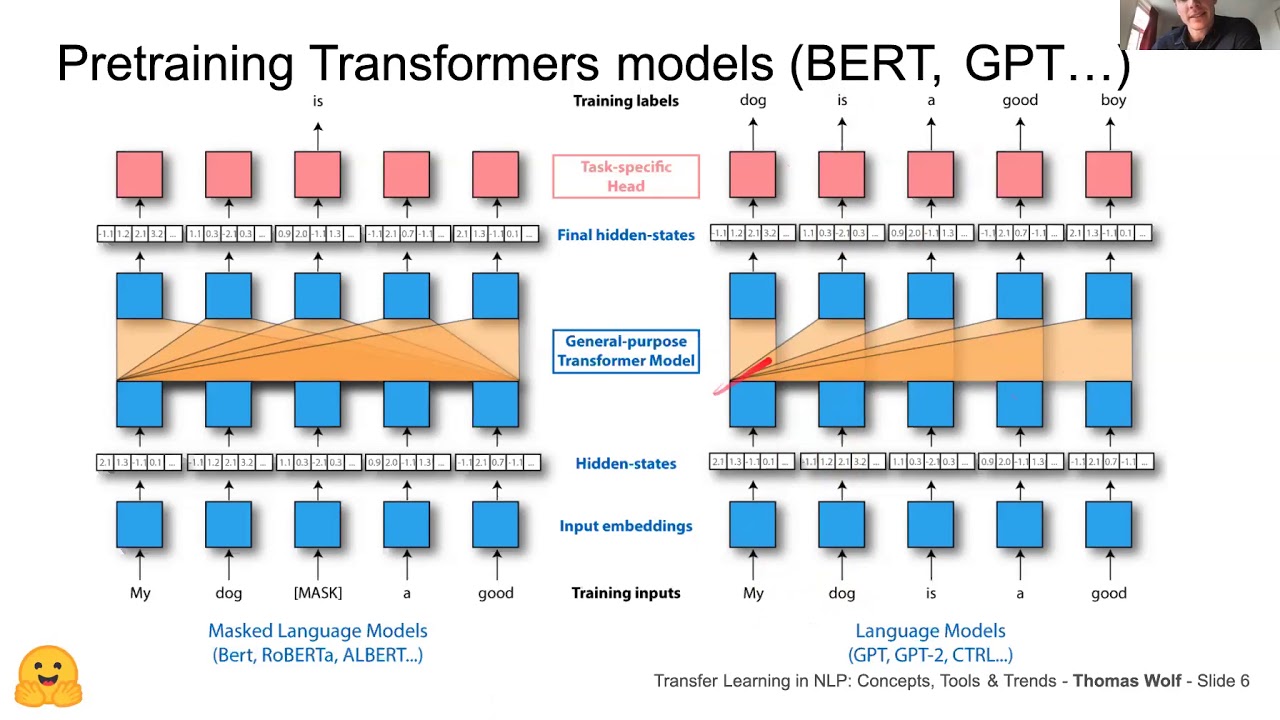

12/10/2020: In this talk, I'll start by introducing the recent breakthroughs in NLP that resulted from combining transfer learning schemes with Transformer architectures. The HuggingFace implementation offers many useful features and hides low-level details behind a clean API. An Introduction to Transfer Learning and HuggingFace (YouTube).

The model was trained on a single TPU Pod V3-8 for 240,000 steps in total, using a sequence length of 512 and a batch size of 4,096. We'll create a LightningModule that fine-tunes using features extracted by BERT. Then we'll learn to use the open-source tools released by HuggingFace, such as the Transformers and Tokenizers libraries and the distilled models.
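The training configuration quoted here (240,000 steps, sequence length 512, batch size 4,096) can be turned into a back-of-the-envelope count of how much data the run processed. This sketch assumes that "batch size" counts sequences per step and that every sequence fills all 512 positions, so the token figure is an upper bound; padding would lower the number of real tokens.

```python
# Back-of-the-envelope count of what the quoted training run processed.
# Assumptions: batch size counts sequences per step, and every sequence
# uses the full 512-token length (so token positions are an upper bound).
STEPS = 240_000
BATCH_SIZE = 4_096
SEQ_LEN = 512

sequences_seen = STEPS * BATCH_SIZE
token_positions_seen = sequences_seen * SEQ_LEN

print(f"sequences:       {sequences_seen:,}")
print(f"token positions: {token_positions_seen:,}")
```

This works out to 983,040,000 sequences and 503,316,480,000 (about 5.0 × 10^11) token positions.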

The second part of the talk will be dedicated to an introduction of the open-source tools released by HuggingFace, in particular our Transformers, Tokenizers, and Datasets libraries. However, transfer learning methods, though popular in areas such as NLP and computer vision, have not yet been effectively developed for machine learning in computational chemistry. We'll train the BertMNLIFinetuner using the Lightning Trainer.
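The BertMNLIFinetuner code is not reproduced here, so the following is only a plain-Python sketch of the idea behind fine-tuning on features extracted by BERT: a small linear head maps a pooled sentence-pair feature vector to logits over the three MNLI classes, and one gradient step updates only the head. The 4-dimensional feature vector, the label order, and the learning rate are hypothetical stand-ins; a real setup would take 768-dimensional features from BERT-base and train through PyTorch Lightning.

```python
import math

# Hypothetical label order; real MNLI datasets may order labels differently.
LABELS = ["entailment", "neutral", "contradiction"]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def head_forward(W, b, features):
    """Linear classification head: logits[k] = W[k] . features + b[k]."""
    return [sum(w * f for w, f in zip(row, features)) + bk for row, bk in zip(W, b)]


def cross_entropy(probs, label):
    """Negative log-likelihood of the correct label."""
    return -math.log(probs[label])


def sgd_step(W, b, features, label, lr=0.1):
    """One SGD step on the head only; the feature extractor stays frozen."""
    probs = softmax(head_forward(W, b, features))
    # For softmax + cross-entropy, d(loss)/d(logits) = probs - one_hot(label).
    grad = [p - (1.0 if k == label else 0.0) for k, p in enumerate(probs)]
    new_W = [[w - lr * g * f for w, f in zip(row, features)] for row, g in zip(W, grad)]
    new_b = [bk - lr * g for bk, g in zip(b, grad)]
    return new_W, new_b


# Hypothetical stand-in for a pooled sentence-pair embedding from BERT.
features = [0.5, -1.0, 0.25, 2.0]
label = LABELS.index("entailment")

W = [[0.0] * len(features) for _ in LABELS]
b = [0.0] * len(LABELS)

loss_before = cross_entropy(softmax(head_forward(W, b, features)), label)
W, b = sgd_step(W, b, features, label)
loss_after = cross_entropy(softmax(head_forward(W, b, features)), label)
print(f"loss before: {loss_before:.4f}, after: {loss_after:.4f}")
```

Because the gradient with respect to the logits is just the predicted probabilities minus the one-hot label, training a head on frozen features is cheap; full fine-tuning additionally backpropagates that same signal into BERT's own weights.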

In this session, we will start by introducing the recent breakthroughs in NLP that resulted from combining transfer learning with Transformer architectures. It has approximately 220M parameters in total and was trained using an encoder-decoder architecture.
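To give a sense of scale for a model of roughly 220M parameters, here is a rough memory estimate. It is a sketch under stated assumptions: parameters are dense, only weights are counted (plus, for training, fp32 gradients and the two Adam moment buffers), and activations and framework overhead are ignored, so real usage will be higher.

```python
# Rough memory footprint for a model with ~220M parameters (figure from the text).
# Assumptions: dense parameters; activations and framework overhead ignored.
PARAMS = 220_000_000

BYTES_FP32 = 4
BYTES_FP16 = 2
# Plain fp32 training with Adam stores weights, gradients, and two moment buffers.
BYTES_ADAM_FP32 = BYTES_FP32 * 4


def gigabytes(num_bytes):
    """Convert bytes to decimal gigabytes (1 GB = 10**9 bytes)."""
    return num_bytes / 10**9


weights_fp32_gb = gigabytes(PARAMS * BYTES_FP32)
weights_fp16_gb = gigabytes(PARAMS * BYTES_FP16)
adam_training_gb = gigabytes(PARAMS * BYTES_ADAM_FP32)

print(f"fp32 weights:        {weights_fp32_gb:.2f} GB")
print(f"fp16 weights:        {weights_fp16_gb:.2f} GB")
print(f"fp32 Adam training:  {adam_training_gb:.2f} GB (before activations)")
```

Under these assumptions, the weights alone take about 0.88 GB in fp32 (0.44 GB in fp16), and plain fp32 Adam training needs roughly 3.52 GB before activations are counted.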