Transfer Learning Layers

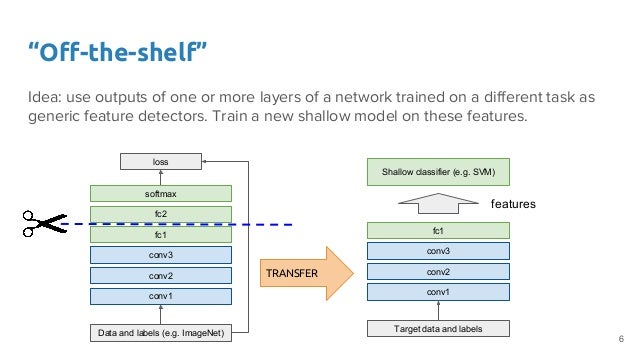

5/20/2019: Transfer learning via feature extraction.

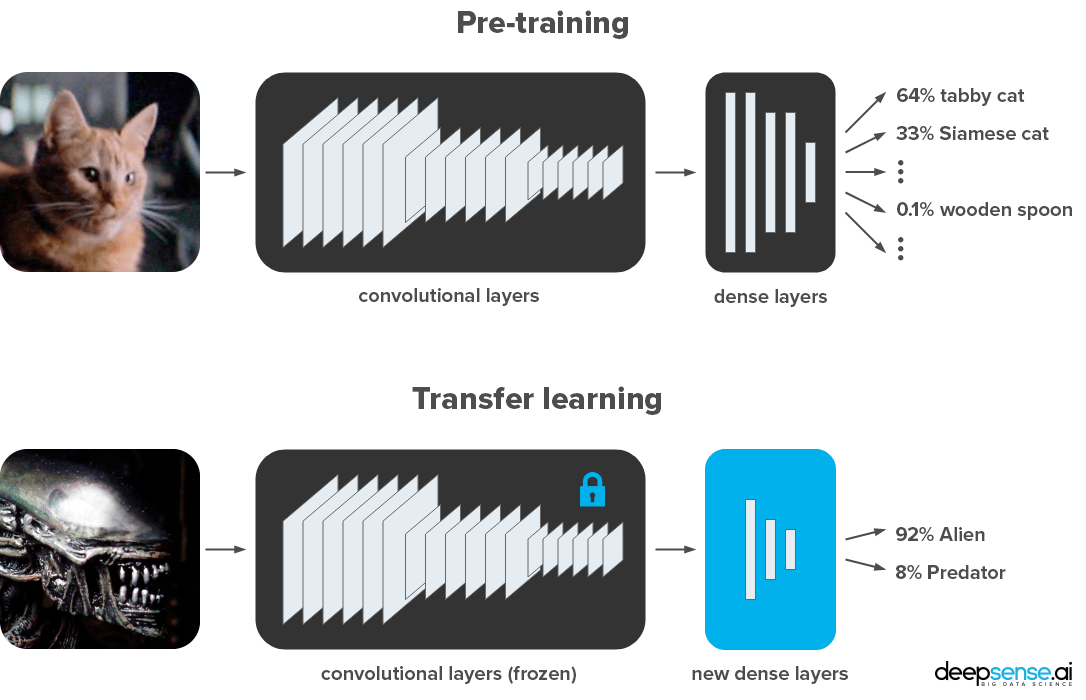

To slow down learning in the transferred layers, set the initial learning rate to a small value. The new layers in question are the ones added to replace the original output layer. Model training can be done with `GradientTape`.
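The learning-rate advice above can be sketched with a custom `tf.GradientTape` loop. This is a minimal sketch, not the method of any particular tutorial: the two tiny networks, the learning rates, and the random data are all assumptions chosen for illustration, and using two optimizers is just one way to give the transferred layers a smaller step size than the new ones.

```python
import numpy as np
import tensorflow as tf

# Hypothetical stand-in for the transferred layers; in practice these
# would come from a pre-trained model.
transferred = tf.keras.Sequential(
    [tf.keras.Input(shape=(8,)), tf.keras.layers.Dense(16, activation="relu")]
)
# New layers that replace the original output layer (3 classes is arbitrary).
new_head = tf.keras.Sequential(
    [tf.keras.Input(shape=(16,)), tf.keras.layers.Dense(3)]
)

# Assumed learning rates: small for transferred layers, larger for new ones.
slow_opt = tf.keras.optimizers.SGD(learning_rate=1e-4)
fast_opt = tf.keras.optimizers.SGD(learning_rate=1e-2)
loss_fn = tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True)


def train_step(x, y):
    """Run one gradient step and return the loss as a Python float."""
    with tf.GradientTape() as tape:
        logits = new_head(transferred(x, training=True), training=True)
        loss = loss_fn(y, logits)
    old_vars = transferred.trainable_variables
    new_vars = new_head.trainable_variables
    grads = tape.gradient(loss, old_vars + new_vars)
    # Each optimizer updates only its own group of variables.
    slow_opt.apply_gradients(zip(grads[: len(old_vars)], old_vars))
    fast_opt.apply_gradients(zip(grads[len(old_vars):], new_vars))
    return float(loss)


# Random placeholder data, only to exercise the loop.
x = np.random.rand(32, 8).astype("float32")
y = np.random.randint(0, 3, size=(32,))
losses = [train_step(x, y) for _ in range(5)]
```

With `model.fit`, a similar effect is usually approximated by compiling the whole model with one small learning rate, which slows the new layers as well.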

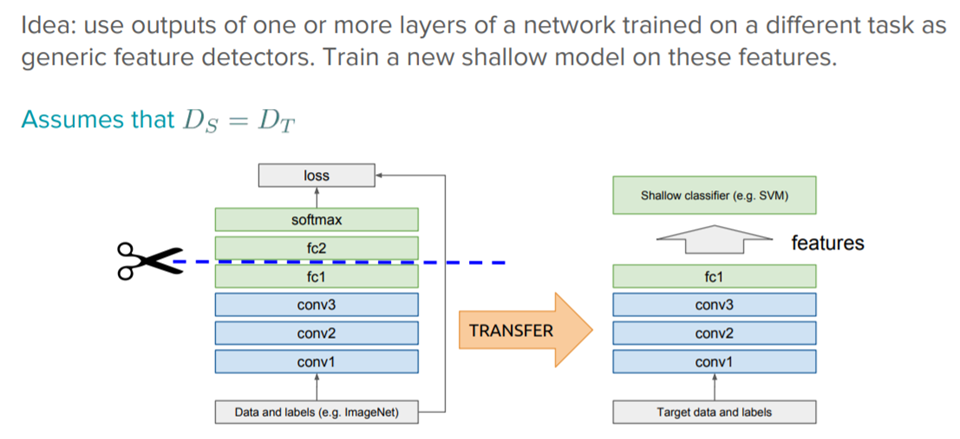

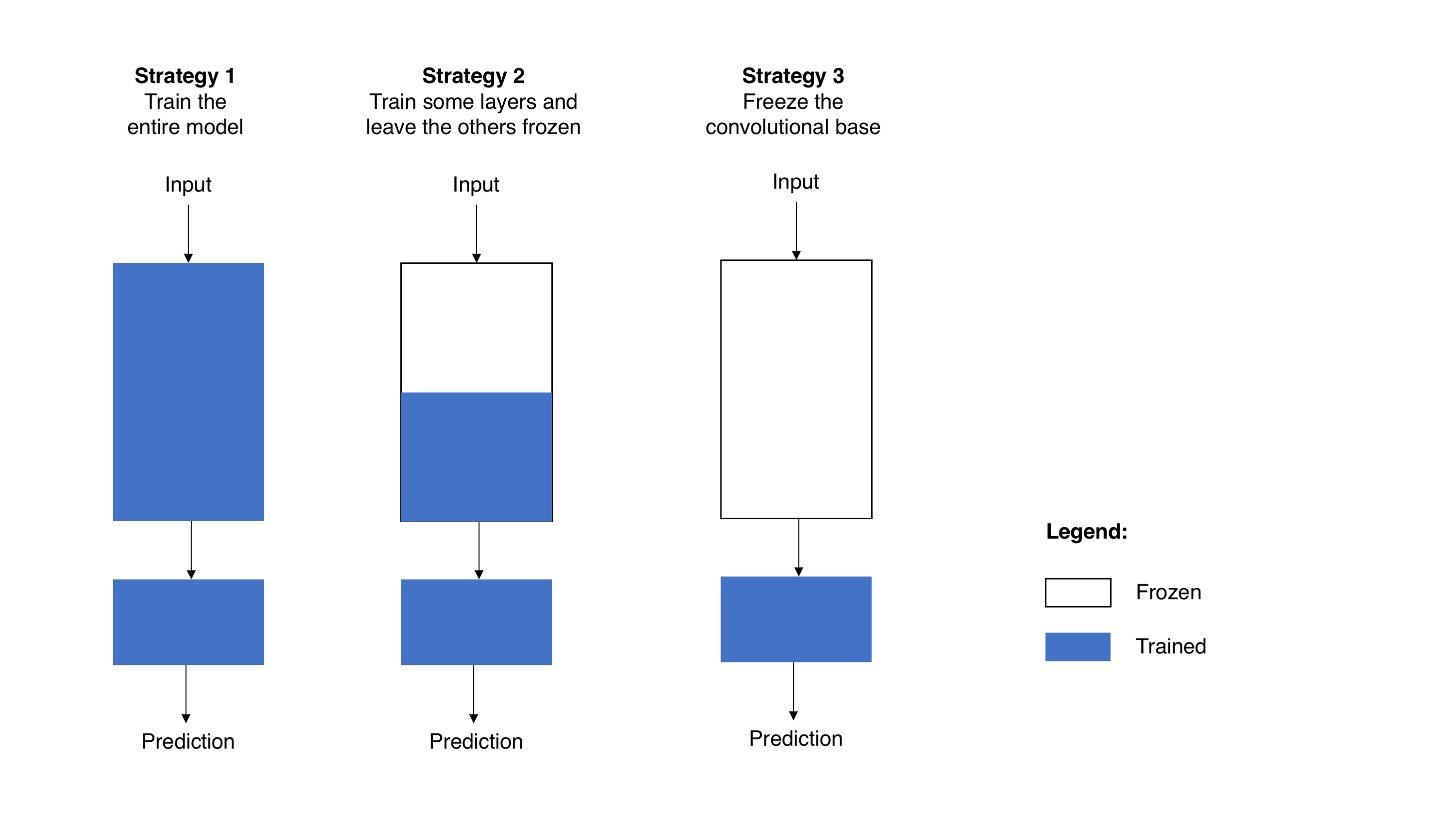

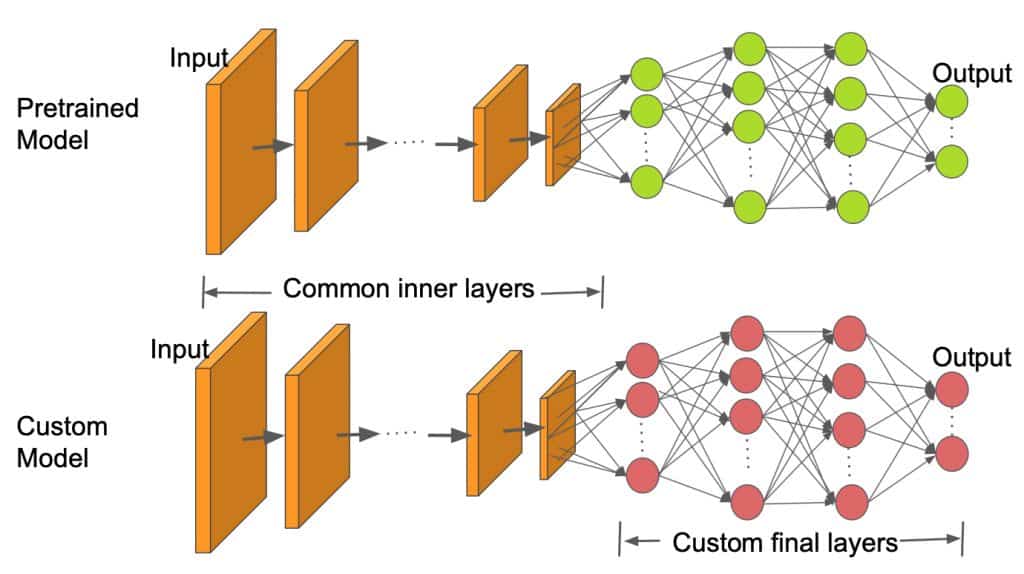

Keeping only part of the pre-trained model and training the rest is referred to as fine-tuning. 3/18/2019: The idea of transfer learning is inherent in the fact that neural networks are layer-wise self-contained; that is, you can remove all layers after a particular layer and bolt on new ones. In the restacking loop, each reused layer is frozen with `layers[i].trainable = False` and re-applied with `x = layers[i](x)`. Final touch: `result_model = Model(input=layer...`.
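As a rough illustration of removing the layers after a particular layer and attaching a new output, the sketch below uses a tiny hypothetical network in place of a real pre-trained model. The layer names (`conv1`, `pool`, `old_output`, `new_output`) and the class counts are assumptions made for the example.

```python
import tensorflow as tf

# Hypothetical small network standing in for a real pre-trained model.
inputs = tf.keras.Input(shape=(32, 32, 3))
h = tf.keras.layers.Conv2D(8, 3, activation="relu", name="conv1")(inputs)
h = tf.keras.layers.GlobalAveragePooling2D(name="pool")(h)
old_out = tf.keras.layers.Dense(10, activation="softmax", name="old_output")(h)
pretrained = tf.keras.Model(inputs, old_out)

# Freeze every transferred layer so its weights are not updated.
for layer in pretrained.layers:
    layer.trainable = False

# Cut the network after "pool" and attach a new, trainable output layer.
features = pretrained.get_layer("pool").output
new_out = tf.keras.layers.Dense(2, activation="softmax", name="new_output")(features)
model = tf.keras.Model(pretrained.input, new_out)

model.compile(optimizer="adam", loss="sparse_categorical_crossentropy")
```

Only the kernel and bias of `new_output` remain trainable here. Unfreezing some of the transferred layers later would turn this feature-extraction setup into fine-tuning.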

Take layers from a previously trained model. If you are going to fine-tune the model, do not forget to mark the other layers as un-trainable: start from `x = new_conv` and loop with `for i in range(3, len(layers)):`. You can then take advantage of these learned feature maps without having to start from scratch by training a large model on a large dataset.
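The restacking fragments quoted in this section (`new_conv`, the `range(3, len(layers))` loop, `result_model`) can be assembled into a runnable sketch. The base network here is a small hypothetical stand-in with made-up layer names, not the model the fragments were written for, and `base.input` is used in place of the truncated `Model(...)` arguments as an assumption.

```python
import tensorflow as tf

L = tf.keras.layers

# Hypothetical base model: input, two conv layers, pooling, classifier.
inp = tf.keras.Input(shape=(32, 32, 3))
h = L.Conv2D(64, 3, padding="same", activation="relu", name="conv1")(inp)
h = L.Conv2D(64, 3, padding="same", activation="relu", name="conv2")(h)
h = L.MaxPooling2D(name="pool")(h)
h = L.Flatten(name="flatten")(h)
out = L.Dense(10, name="fc")(h)
base = tf.keras.Model(inp, out)

layers = list(base.layers)  # [input, conv1, conv2, pool, flatten, fc]

# New convolution applied directly to the original input tensor. It keeps
# 64 filters so the shapes expected by the reused layers still match.
new_conv = L.Conv2D(
    filters=64, kernel_size=(5, 5), name="new_conv", padding="same"
)(base.input)

# Now stack everything back, skipping the two original conv layers and
# marking the reused layers as un-trainable.
x = new_conv
for i in range(3, len(layers)):
    layers[i].trainable = False
    x = layers[i](x)

# Final touch: a new model from the original input to the restacked output.
result_model = tf.keras.Model(inputs=base.input, outputs=x)
```

In this sketch only `new_conv` is trainable; the reused `fc` layer keeps its weights because the flattened feature size is unchanged.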

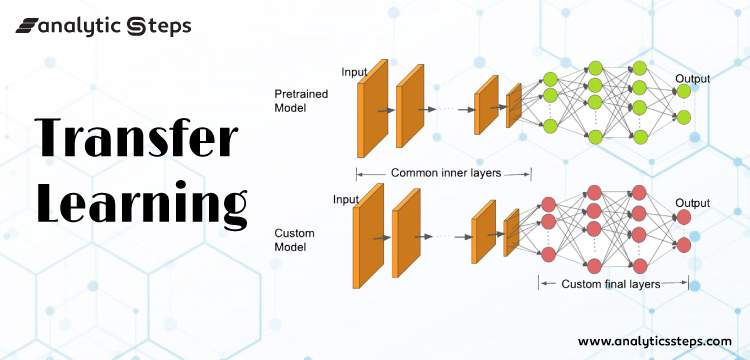

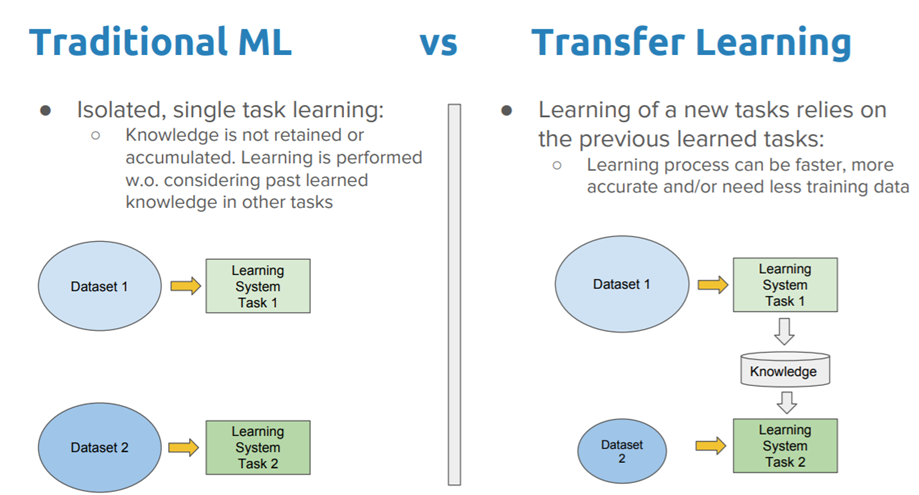

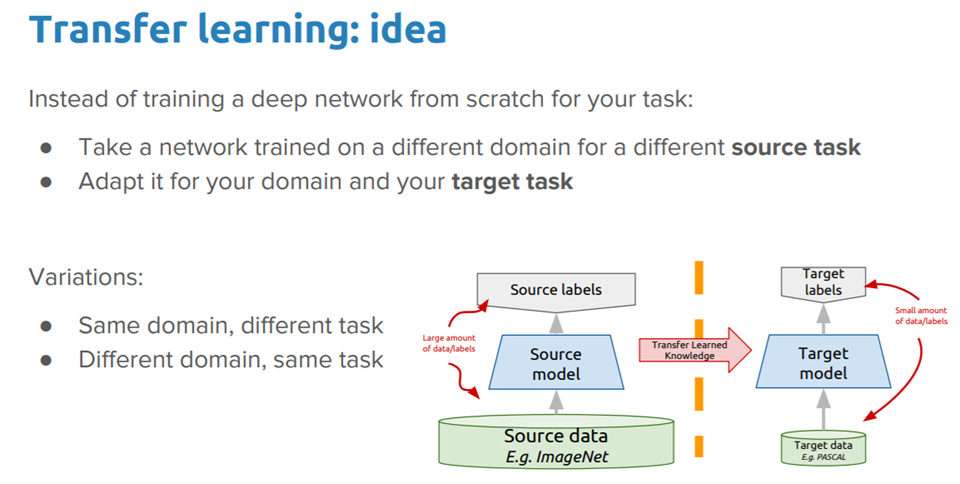

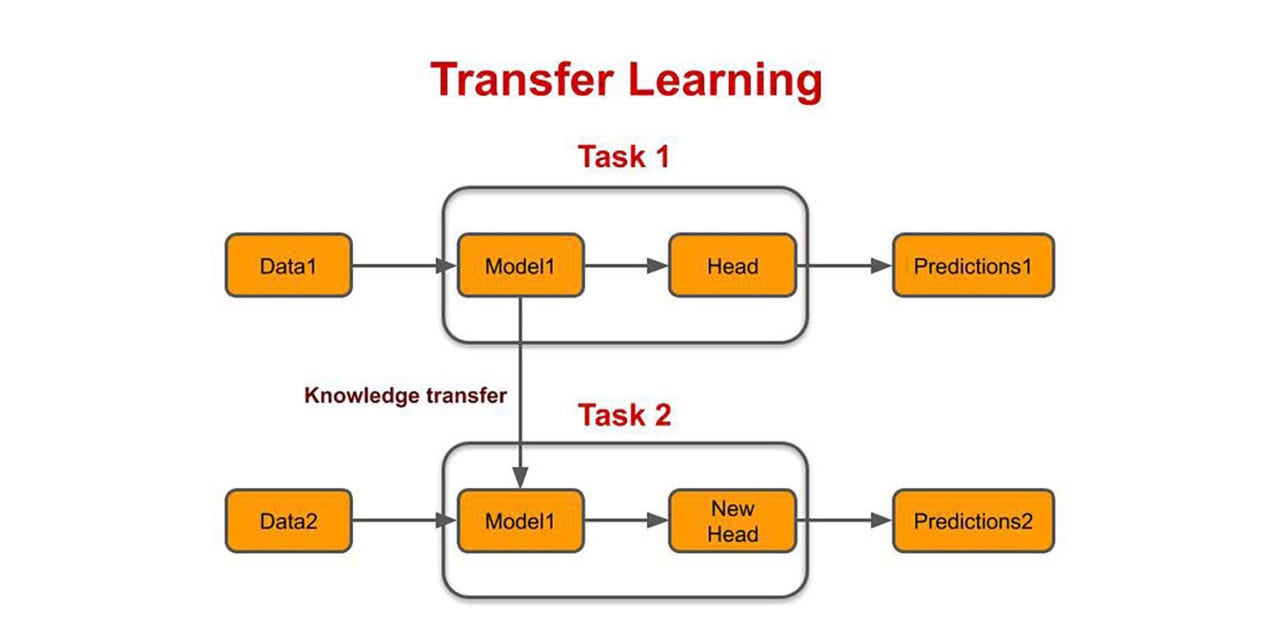

When conducting transfer learning, the entire model architecture and weights can be used for the task at hand, or just certain portions/layers of the model can be used. Transfer learning is a machine learning technique where a model trained on one task is repurposed for a second, related task. One or more layers from the trained model are then used in a new model trained on the problem of interest.

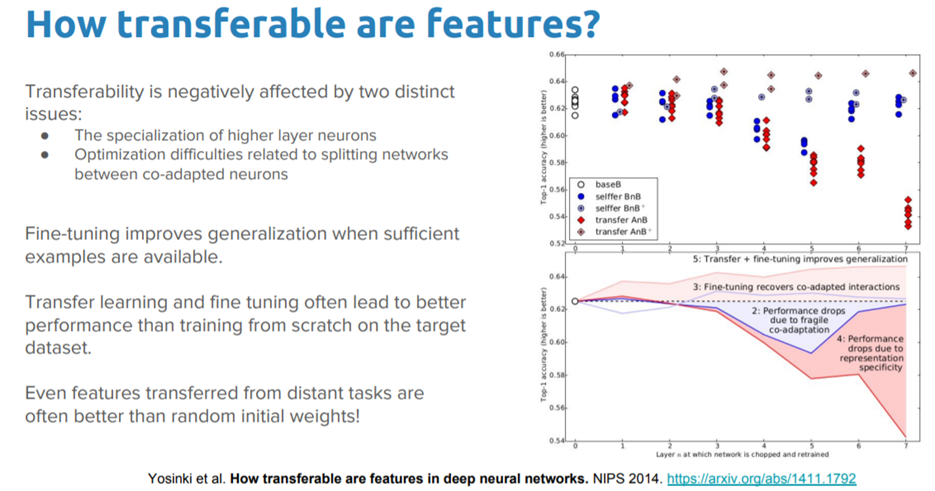

Transfer learning and domain adaptation refer to the situation where what has been learned in one setting is exploited to improve generalization in another setting (Deep Learning, 2016, p. 526). Andrew Ng has also spoken about transfer learning. In transfer learning, a machine exploits the knowledge gained from a previous task to improve generalization on another.

Hence we are training only a few dense layers. The replacement convolution is defined as `new_conv = Conv2D(filters=64, kernel_size=(5, 5), name='new_conv', padding='same')(layers[0].output)`. Now stack everything back. A disadvantage of transfer learning is that layers cannot be freely removed from the pre-trained network to reduce the number of parameters.
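To make "training only a few dense layers" concrete, the sketch below counts trainable and frozen parameters for a frozen base with a small dense head. The layer sizes and the `frozen_base` name are arbitrary assumptions, and the base is a stand-in rather than a real pre-trained network.

```python
import math

import tensorflow as tf

# Hypothetical frozen base standing in for a pre-trained feature extractor.
base = tf.keras.Sequential(
    [
        tf.keras.Input(shape=(64,)),
        tf.keras.layers.Dense(128, activation="relu"),
        tf.keras.layers.Dense(128, activation="relu"),
    ],
    name="frozen_base",
)
base.trainable = False  # freezes every layer inside the base

# A few new dense layers on top; only these are trained.
model = tf.keras.Sequential(
    [
        tf.keras.Input(shape=(64,)),
        base,
        tf.keras.layers.Dense(32, activation="relu"),
        tf.keras.layers.Dense(5, activation="softmax"),
    ]
)


def count_params(weights):
    """Total number of scalar parameters across a list of weight tensors."""
    return sum(math.prod(tuple(w.shape)) for w in weights)


trainable = count_params(model.trainable_weights)
frozen = count_params(model.non_trainable_weights)
print(f"trainable: {trainable}, frozen: {frozen}")
```

The frozen base still contributes all of its parameters to the forward pass, which is why the total model size does not shrink even though few weights are being trained.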