Transfer Learning Using XGBoost

Consider a stack that is very complex: it is either trained on multiple small pieces of different data, or it contains tens of different XGBoost models.

Boosting is a sequential process. XGBoost is designed to improve the performance and speed of a machine learning model. Set up environment variables for your project ID, your Cloud Storage bucket, the Cloud Storage path to the training data, and your algorithm selection.

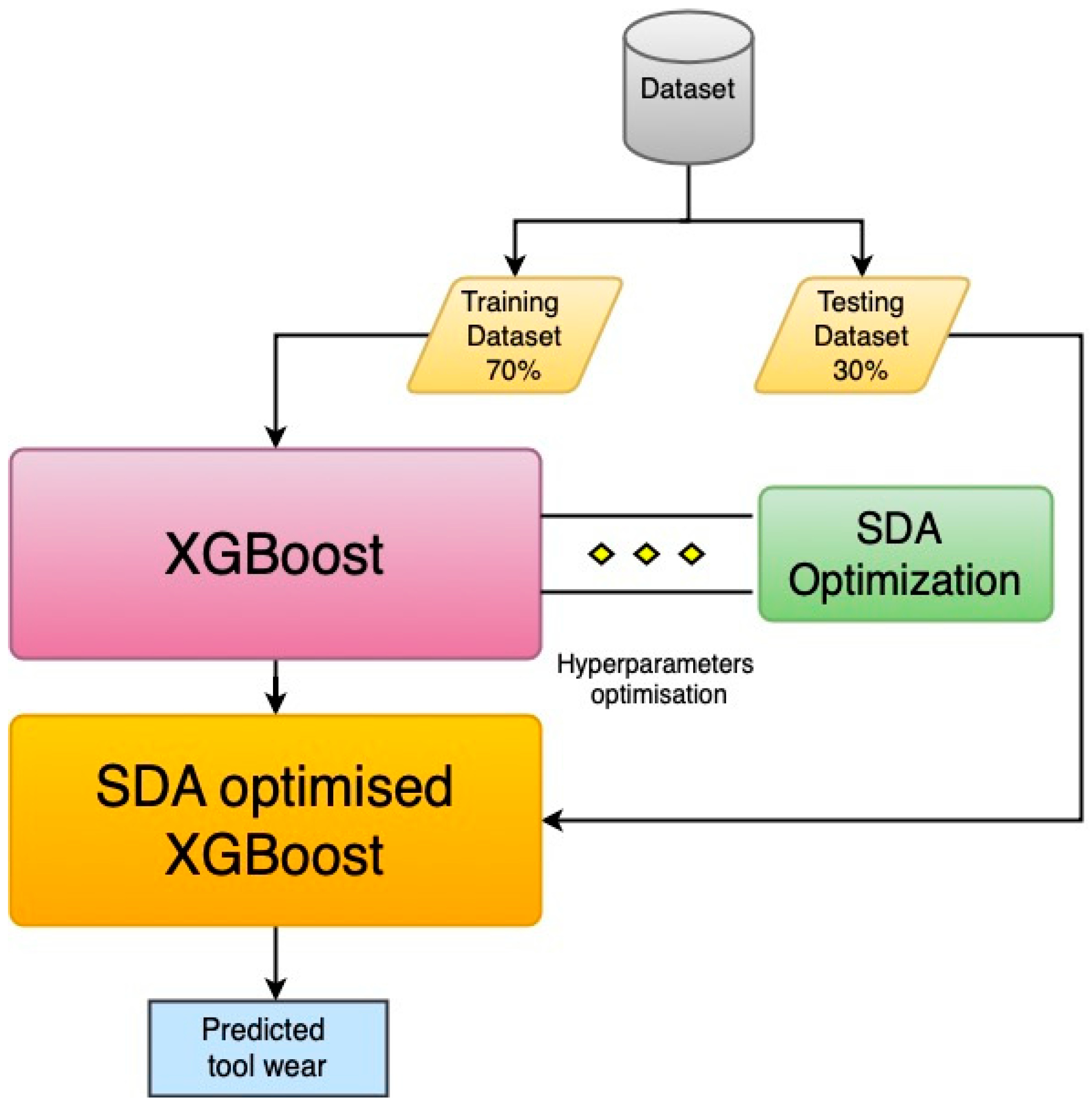

I train the base model on generic historical data and then use transfer learning to fine-tune it on specific live data. The XGBoost model can be used to predict sublayer water absorption for the target well after training with the transferred dataset. The transfer learning model provides a tool to allocate sublayer water absorption for injectors that have no water injection profile.

See also "Transfer Learning and XGBoost" on Kaggle and "A Complete Guide to XGBoost Model in Python using scikit-learn". AI Platform Training built-in algorithms are in Docker containers hosted in Container Registry.

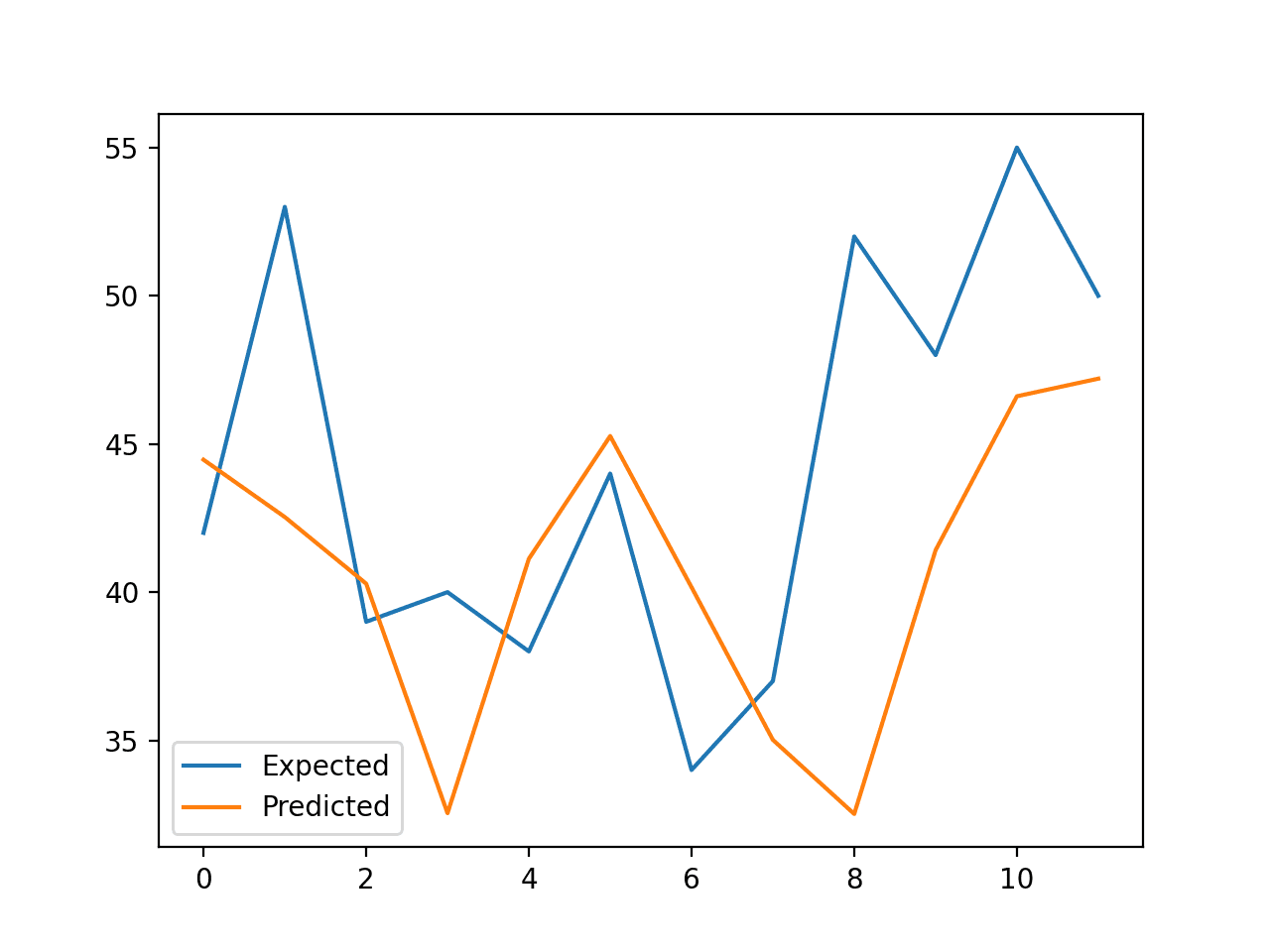

When you do transfer learning, you want to freeze the first N-1 or N-2 layers and allow only the last layers to adjust based on new data. It would take too long to train a full new model on live data, when ideally I would. We will be using XGBoost (eXtreme Gradient Boosting), a type of boosted tree regression algorithm.

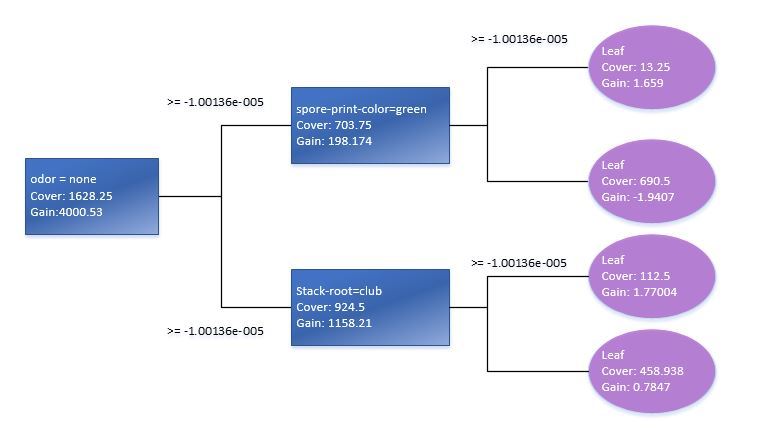

The training dataset for the target well block can be constructed by transferring knowledge from the source well block. XGBoost belongs to a family of boosting algorithms that convert weak learners into strong learners. F2 is boosted.
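To make "converting weak learners into strong learners" concrete, here is a minimal sketch of sequential boosting with decision stumps on a toy one-dimensional regression problem. It is plain gradient boosting with squared loss, written in pure Python for illustration; it is not XGBoost's actual algorithm, which adds regularization and uses second-order gradient information. The function names, the toy data, and the learning rate are all hypothetical.

```python
def fit_stump(xs, residuals):
    """Find the single split (threshold, left_value, right_value) that
    minimises squared error against the current residuals."""
    best = None
    for t in sorted(set(xs)):
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        if not left or not right:
            continue  # a split must leave points on both sides
        lm = sum(left) / len(left)
        rm = sum(right) / len(right)
        err = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
        if best is None or err < best[0]:
            best = (err, t, lm, rm)
    return best[1:]


def boost(xs, ys, rounds, learning_rate=0.5):
    """Add stumps one at a time, each fitted to what the ensemble still gets wrong."""
    base = sum(ys) / len(ys)
    preds = [base] * len(ys)
    stumps = []
    # history[0] is the error of the constant (mean) predictor.
    history = [sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys)]
    for _ in range(rounds):
        residuals = [y - p for y, p in zip(ys, preds)]
        t, lv, rv = fit_stump(xs, residuals)
        stumps.append((t, lv, rv))
        preds = [p + learning_rate * (lv if x <= t else rv) for x, p in zip(xs, preds)]
        history.append(sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys))
    return base, stumps, history


# Toy data: y = x^2 on a small grid.
xs = [i / 10 for i in range(20)]
ys = [x * x for x in xs]

base, stumps, history = boost(xs, ys, rounds=30)
print(f"MSE with mean only: {history[0]:.4f}")
print(f"MSE after {len(stumps)} stumps: {history[-1]:.4f}")
```

Each stump on its own is a poor predictor, but because every new stump is fitted to the residuals of the ensemble built so far, the training error does not increase from one round to the next.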

Finally, the XGBoost model with just structural descriptors successfully predicted 38 top MOFs with larger Xe/Kr adsorption selectivity and Xe uptake than the recently reported SBMOF-1 and Z11CBF-1000-2, which overcomes the defects of random forest and transfer machine learning. Federated learning and federated transfer learning. I think the same could be true of using XGBoost.