Transfer Learning XGBoost

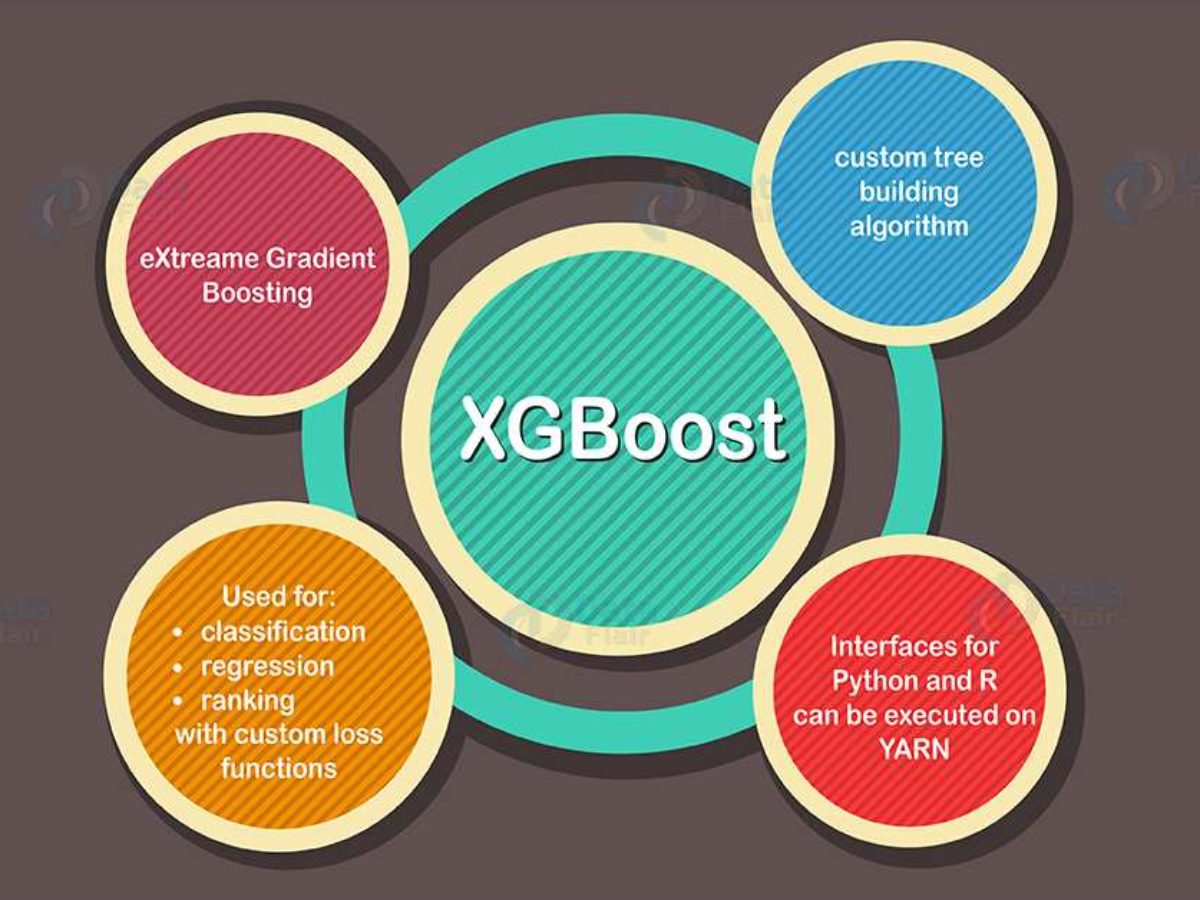

XGBoost is a decision-tree-based ensemble machine learning algorithm that uses a gradient boosting framework.

A prediction that reaches 95% of the model-optimal result is better than no prediction. Ensemble learning involves training and combining individual models, known as base learners, to get a single prediction, and XGBoost is one of the ensemble learning methods. XGBoost (Extreme Gradient Boosting) is an optimized distributed gradient boosting library.

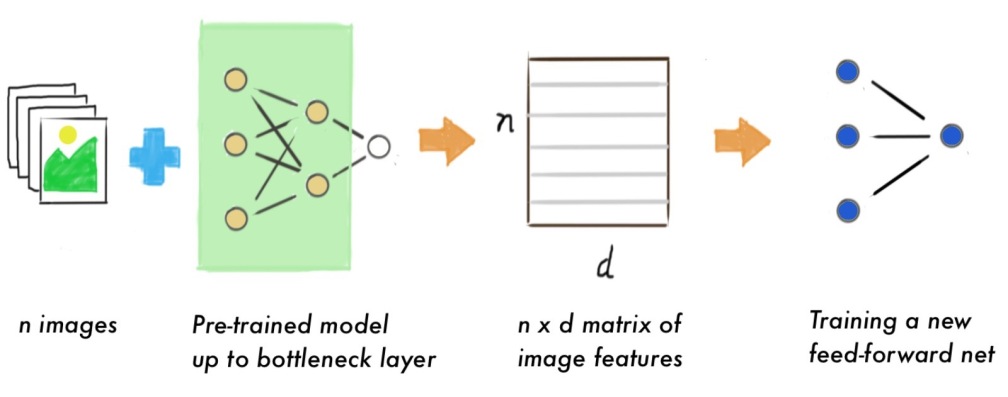

It would take too long to train a full new model on live data when ideally I would. Transfer-learning-multi-model-CNN-XGBoost-SVC: plant seedlings classification using three classifiers. We used weights from a pre-trained VGG19 model to take advantage of transfer learning and train a classification model that determines different species of seedlings.

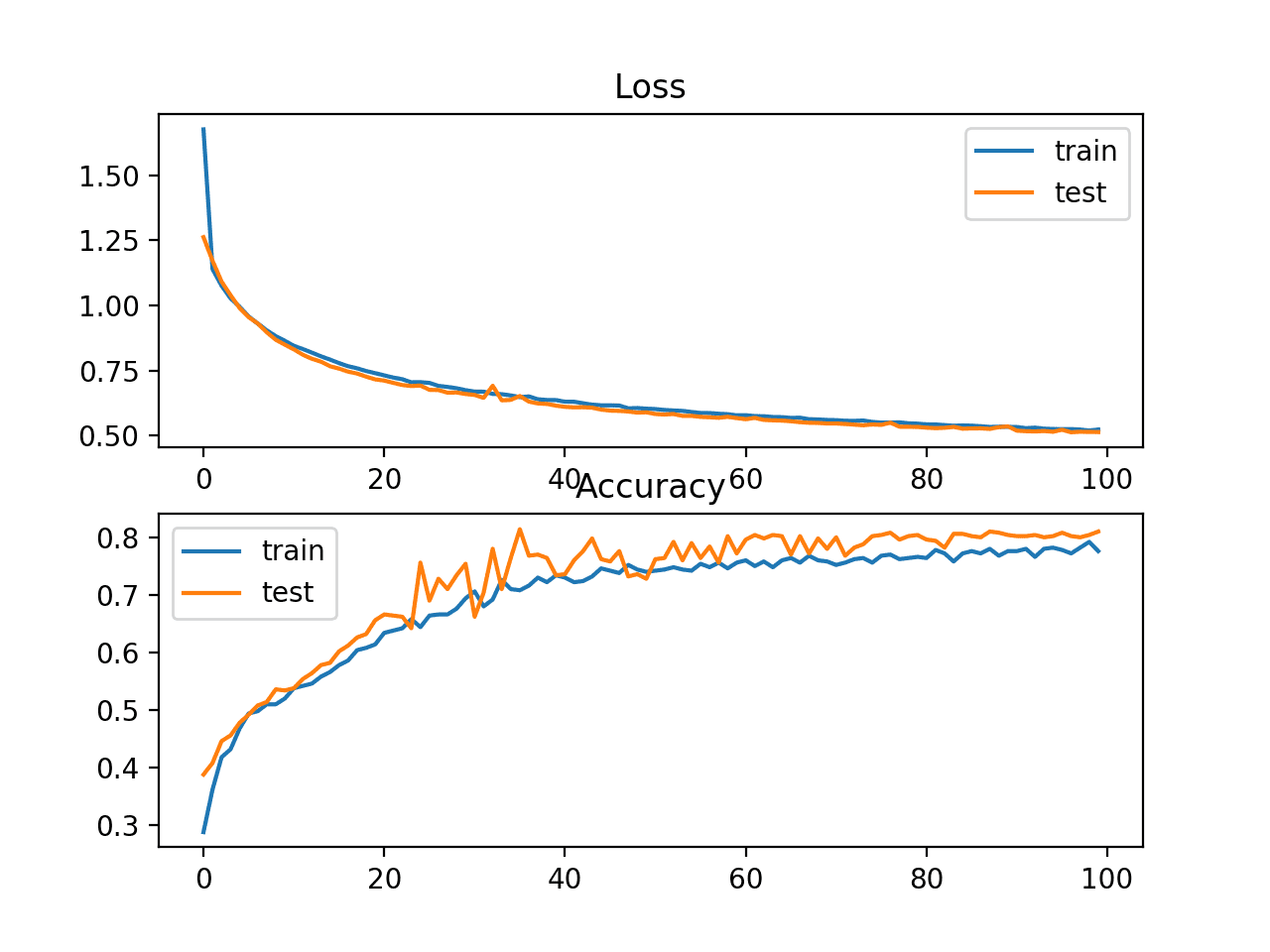

XGBoost (eXtreme Gradient Boosting) is a highly scalable end-to-end tree boosting system developed by Tianqi Chen and Carlos Guestrin at the University of Washington. Via the 2D-CNN framework, transfer learning with MobileNet shows an accuracy of 0.91, while the custom-constructed classifier reaches an accuracy of 0.89. XGBoost was created by Tianqi Chen while he was a PhD student at the University of Washington.

XGBoost is used as a go-to algorithm for both regression and classification. I have only changed the parts of the code specifically related to the training and testing of the model. XGBoost is used for supervised ML problems.

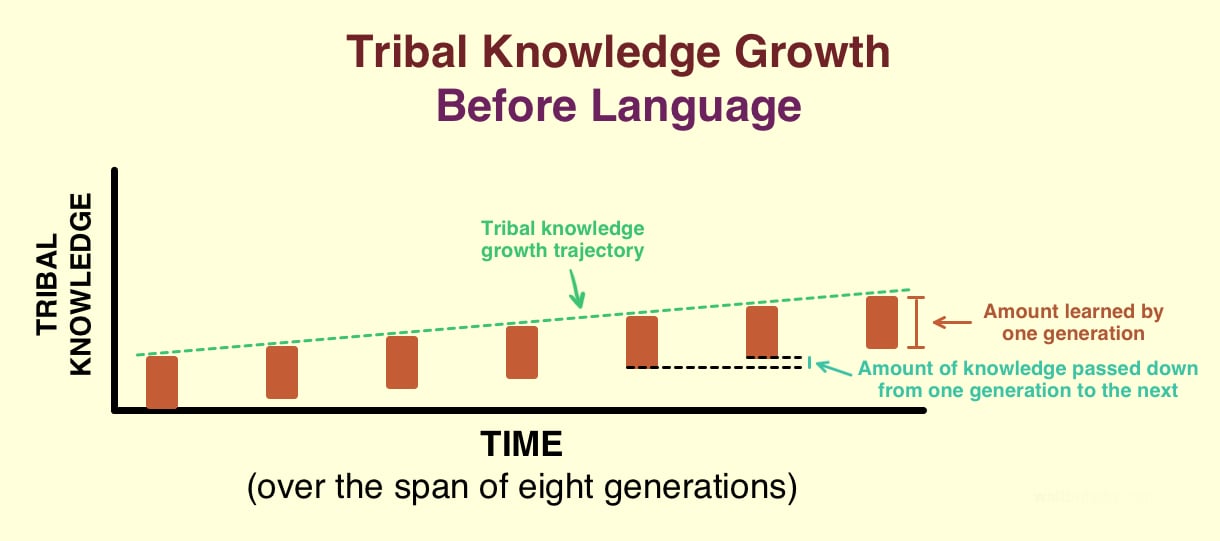

Many deep neural networks trained on images have a curious phenomenon in common. While most machine learning is designed to address a single task, the development of algorithms that facilitate transfer learning is a topic of ongoing interest in the machine-learning community. Treelite plays well with XGBoost: if you used XGBoost to train your ensemble model, you need only one line of code to import it.

XGBoost expects base learners that are individually weak, so that when all the predictions are combined the bad predictions cancel out and the better ones sum up to form the final prediction. I think the same could be true of using XGBoost.
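The idea of combining many weak base learners can be sketched with plain gradient boosting on squared error, using one-split decision stumps and NumPy only. This is a simplified illustration, not XGBoost's actual algorithm, which also uses second-order gradient information and regularization. The helper names (`fit_stump`, `predict_stump`, `boost`, `predict`) and the synthetic data are hypothetical, and the sketch assumes at least one feature has two or more distinct values.

```python
import numpy as np

def fit_stump(X, residual):
    """Return (feature, threshold, left_value, right_value) minimizing squared error."""
    best = None
    for j in range(X.shape[1]):
        # Skip the largest value so the right-hand side is never empty.
        for t in np.unique(X[:, j])[:-1]:
            left = X[:, j] <= t
            lv, rv = residual[left].mean(), residual[~left].mean()
            err = ((residual[left] - lv) ** 2).sum() + ((residual[~left] - rv) ** 2).sum()
            if best is None or err < best[0]:
                best = (err, j, t, lv, rv)
    return best[1:]

def predict_stump(stump, X):
    j, t, lv, rv = stump
    return np.where(X[:, j] <= t, lv, rv)

def boost(X, y, n_rounds=50, lr=0.1):
    """Fit each new stump to the residuals left by the current ensemble."""
    base = y.mean()
    pred = np.full(len(y), base)
    stumps = []
    for _ in range(n_rounds):
        stump = fit_stump(X, y - pred)
        pred = pred + lr * predict_stump(stump, X)
        stumps.append(stump)
    return base, stumps

def predict(model, X, lr=0.1):
    base, stumps = model
    return base + lr * sum(predict_stump(s, X) for s in stumps)

rng = np.random.default_rng(1)
X = rng.normal(size=(200, 2))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1]

one_round = boost(X, y, n_rounds=1)
many_rounds = boost(X, y, n_rounds=50)

mse_mean = float(np.mean((y - y.mean()) ** 2))
mse_one = float(np.mean((y - predict(one_round, X)) ** 2))
mse_many = float(np.mean((y - predict(many_rounds, X)) ** 2))
print(mse_mean, mse_one, mse_many)
```

Each stump is a poor predictor on its own, but every round removes part of the remaining training error, so the summed ensemble fits far better than any single stump.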