Transfer Learning Zhihu

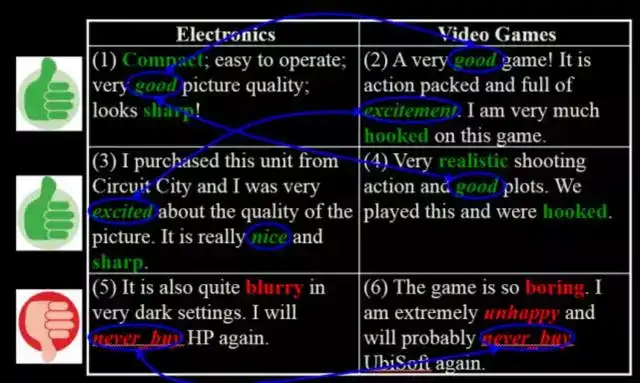

Manifold Regularized Multi-Task Learning.

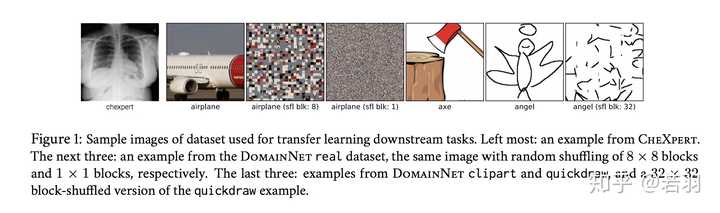

Transfer learning zhihu. Auxiliary tasks are widely used to address the lack of data by providing additional supervision in semiself-supervised learning transfer learning reinforcement learning etcAssigning the importance weights for different auxiliary tasks remains a crucial and largely understudied research question. From sklearnpreprocessing import OrdinalEncoder ordinal_encoder OrdinalEncoder housing_cat_encoded ordinal_encoder. Volume 9 Issue 9 Sep.

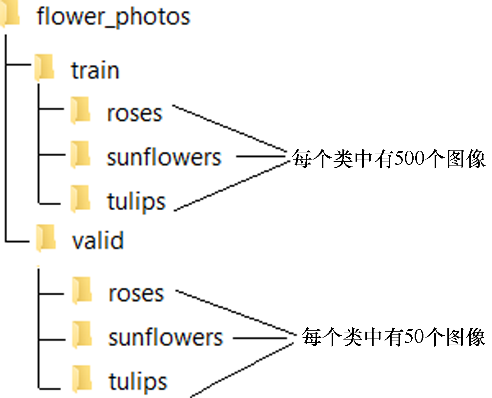

Fit_transform housing_cat. Course 4 includes new notebooks on transfer learning that uses Mobile Net as a lightweight model to fine-tune and segmentation for self-driving cars using Unet. Learning Across Domains and Tasks.
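
The simplest baseline for combining auxiliary tasks with a main task is a fixed weighted sum of their losses; choosing those weights is exactly the open question raised above. The sketch below only shows that baseline. The function name `combined_loss` and the hand-picked weights are illustrative assumptions, not a method taken from the source.

```python
from typing import Sequence


def combined_loss(main_loss: float,
                  aux_losses: Sequence[float],
                  aux_weights: Sequence[float]) -> float:
    """Main-task loss plus a weighted sum of auxiliary-task losses.

    The weights are fixed, hand-chosen hyperparameters here; learning or
    adapting them is the open research question, not handled in this sketch.
    """
    if len(aux_losses) != len(aux_weights):
        raise ValueError("need exactly one weight per auxiliary task")
    return main_loss + sum(w * l for w, l in zip(aux_weights, aux_losses))


# One main task and two auxiliary tasks with hypothetical weights 0.3 and 0.1
total = combined_loss(0.8, [0.5, 1.2], [0.3, 0.1])
print(total)
```

Setting a weight to zero switches its auxiliary task off entirely, so this form also makes it easy to ablate tasks one at a time.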

Tianshou: an elegant, flexible, and superfast PyTorch deep reinforcement learning platform. 10/2020: Our work on meta imbalanced learning was accepted by NeurIPS 2020.

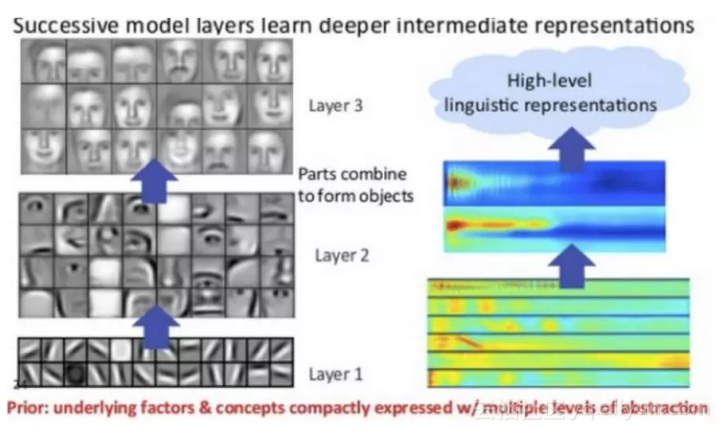

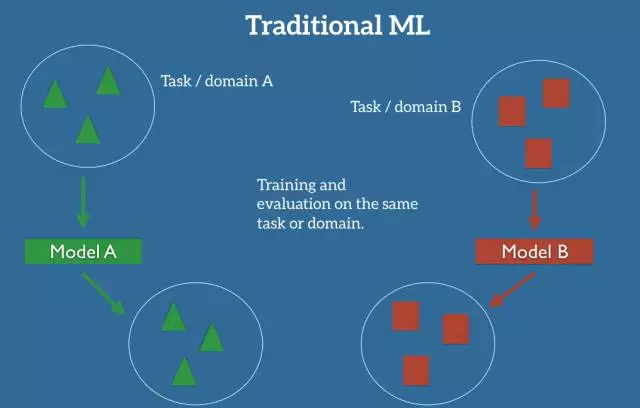

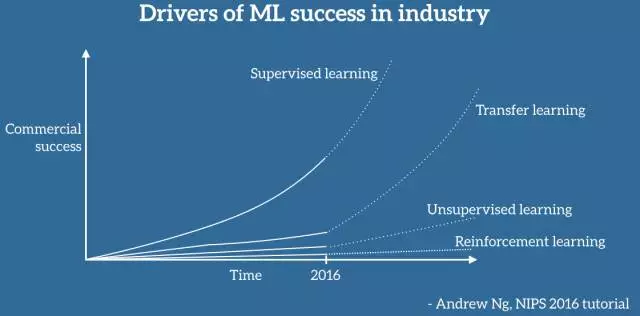

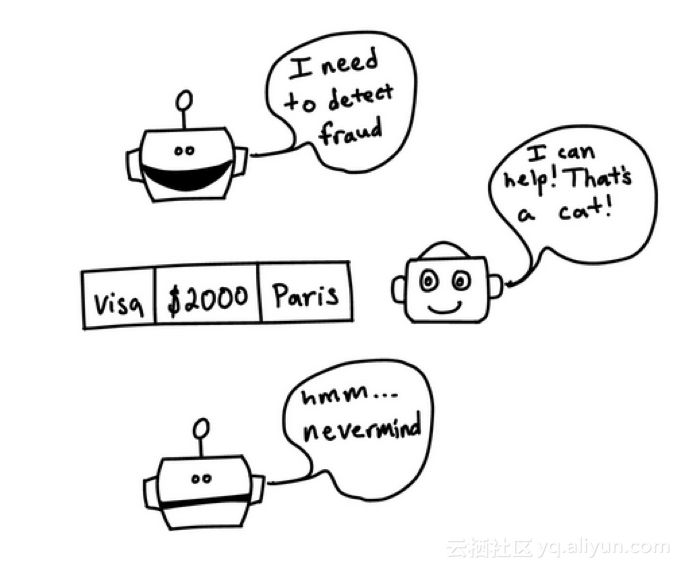

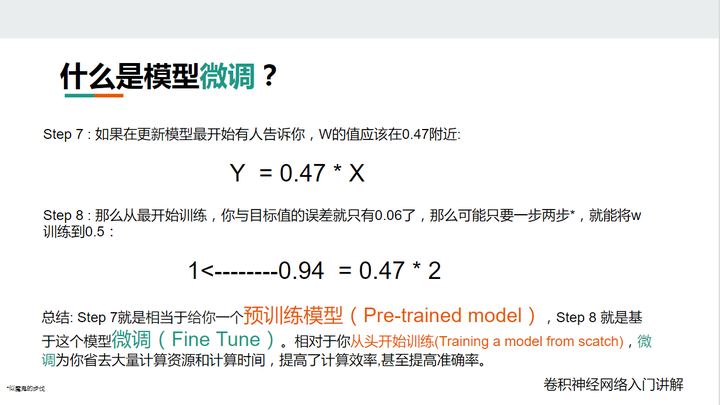

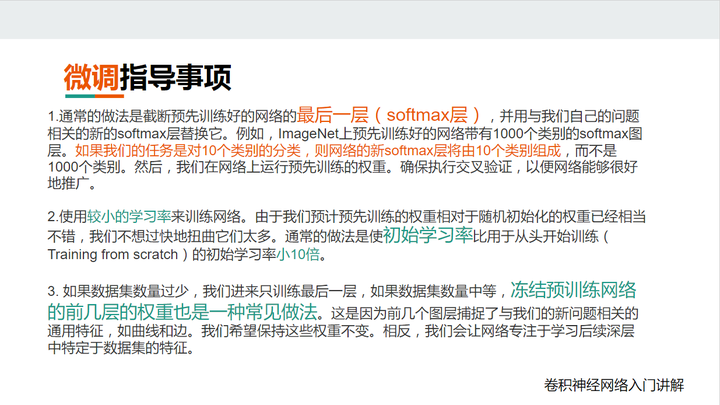

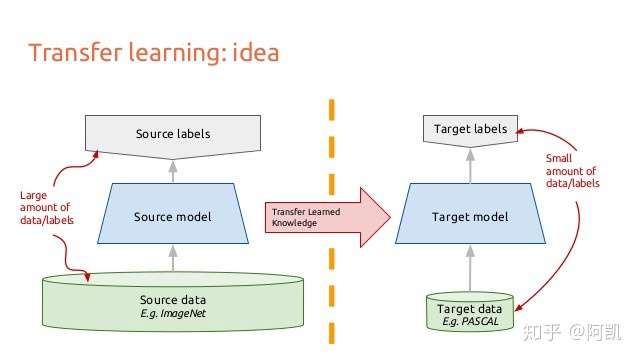

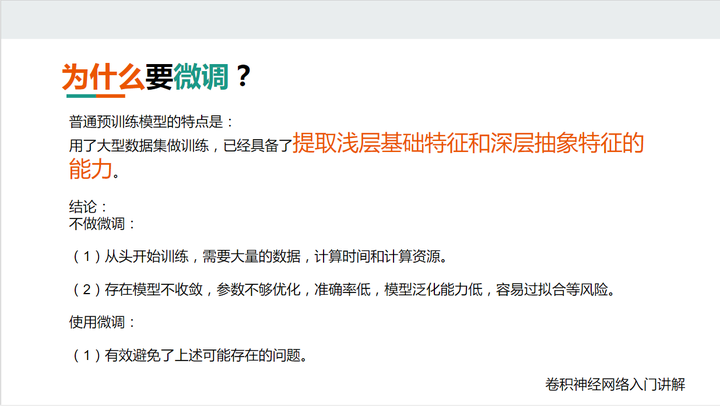

For this we use Scikit-Learn's OrdinalEncoder class. 8/21/2018: It has been repeatedly shown in research and practice that transfer learning helps achieve high accuracy in a short time, since networks trained on somewhat similar tasks discover similar weights.
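
To make the "similar tasks, similar weights" argument concrete, here is a toy sketch with linear regression instead of a neural network. Everything is synthetic and assumed for illustration: the target task's true weights are a small perturbation of the source task's, the source task has plenty of data, and the target task has only ten examples. With the same short fine-tuning budget, starting from the source-task weights should reach a much lower target loss than starting from zero.

```python
import numpy as np

rng = np.random.default_rng(0)
d = 5

# Assumption: the two tasks are "somewhat similar", i.e. the target weights
# differ from the source weights only by a small perturbation.
w_source_true = rng.normal(size=d)
w_target_true = w_source_true + 0.01 * rng.normal(size=d)

# Plenty of (noise-free) source data, only a handful of target examples.
X_src = rng.normal(size=(200, d))
y_src = X_src @ w_source_true
X_tgt = rng.normal(size=(10, d))
y_tgt = X_tgt @ w_target_true


def mse(w, X, y):
    """Mean squared error of the linear model X @ w."""
    return float(np.mean((X @ w - y) ** 2))


def gradient_descent(w0, X, y, lr=0.05, steps=5):
    """Plain full-batch gradient descent on the mean squared error."""
    w = w0.copy()
    for _ in range(steps):
        w -= lr * 2.0 * X.T @ (X @ w - y) / len(y)
    return w


# "Pretraining": solve the source task by least squares.
w_pretrained = np.linalg.lstsq(X_src, y_src, rcond=None)[0]

# Same fine-tuning budget on the target task, two different starting points.
w_finetuned = gradient_descent(w_pretrained, X_tgt, y_tgt)
w_scratch = gradient_descent(np.zeros(d), X_tgt, y_tgt)

print("fine-tuned from source:", mse(w_finetuned, X_tgt, y_tgt))
print("trained from scratch:  ", mse(w_scratch, X_tgt, y_tgt))
```

The advantage here comes entirely from the assumed closeness of the two tasks; if the target weights were unrelated to the source weights, the warm start would give no such head start.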

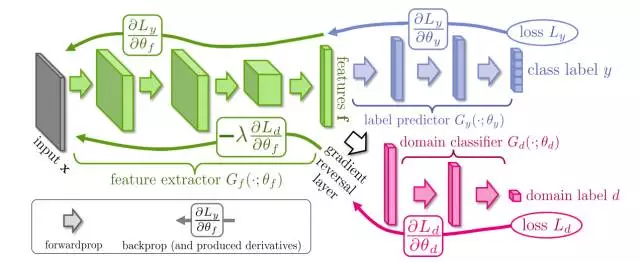

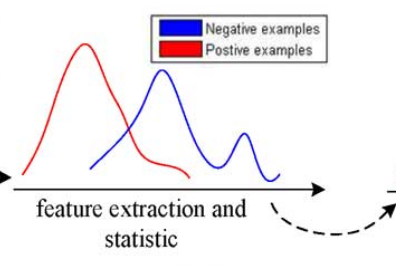

NLP, transfer learning, RL: model fine-tuning can be considered transfer, since it reuses a previously trained model. Other common kinds of transfer are feature-based, instance-based, and model-based transfer learning. Practical Assessment of Pre-trained Models for Transfer Learning (arXiv), Kaichao You.
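
Of the kinds of transfer listed above, feature-based transfer is the easiest to sketch: keep a pretrained network's feature layers frozen and train only a new head on the target task. In the minimal NumPy sketch below, the "pretrained backbone" is a hypothetical stand-in (a fixed random projection followed by ReLU), and the head is a logistic regression trained by gradient descent. Instance-based transfer (reweighting source samples) and model-based transfer (sharing or fine-tuning parameters) are not shown.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical frozen backbone: a fixed projection + ReLU standing in for the
# feature layers of a model trained on a source task (illustrative assumption).
W_backbone = rng.normal(size=(4, 16))


def extract_features(X):
    """Feature-based transfer: the backbone is used as a fixed feature extractor."""
    return np.maximum(X @ W_backbone, 0.0)


def train_head(X, y, lr=0.05, steps=200):
    """Train only a new logistic-regression head; W_backbone is never updated."""
    H = extract_features(X)
    w = np.zeros(H.shape[1])
    b = 0.0
    eps = 1e-12  # guards against log(0)
    losses = []
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(H @ w + b)))
        losses.append(float(-np.mean(y * np.log(p + eps)
                                     + (1 - y) * np.log(1 - p + eps))))
        err = p - y  # per-example gradient of the log-loss w.r.t. the logit
        w -= lr * H.T @ err / len(y)
        b -= lr * float(err.mean())
    return w, b, losses


# Small synthetic target task
X_target = rng.normal(size=(40, 4))
y_target = (X_target[:, 0] + X_target[:, 1] > 0).astype(float)

w_head, b_head, losses = train_head(X_target, y_target)
print(f"log-loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

Because only the 16 head weights and one bias are trained, this works with very little target data; the trade-off is that the features cannot adapt to the new task, which is what full fine-tuning adds.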

A Federated Transfer Learning Framework for Wearable Healthcare. Learners will also get video and reading material on the theory of depthwise separable convolutions and the merged convolutional model, plus an introduction to Keras Applications, and will learn how to use the Sequential and Functional APIs for …
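
The reason MobileNet-style models are lightweight is visible in a simple parameter count. A depthwise separable convolution splits a standard k x k convolution into a depthwise k x k convolution (one filter per input channel) and a 1 x 1 pointwise convolution that mixes channels. The sketch below counts weights only (biases ignored); the layer sizes are arbitrary example values.

```python
def standard_conv_params(k: int, c_in: int, c_out: int) -> int:
    """Weights in a k x k convolution mapping c_in to c_out channels (no bias)."""
    return k * k * c_in * c_out


def depthwise_separable_params(k: int, c_in: int, c_out: int) -> int:
    """Depthwise k x k conv (one filter per input channel) + 1 x 1 pointwise conv."""
    depthwise = k * k * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise


k, c_in, c_out = 3, 32, 64  # example layer sizes
std = standard_conv_params(k, c_in, c_out)
sep = depthwise_separable_params(k, c_in, c_out)
print(std, sep, round(std / sep, 2))  # 18432 2336 7.89
```

In general the ratio of the two counts is 1/c_out + 1/k^2, so for 3 x 3 kernels the separable version needs roughly 8 to 9 times fewer weights once c_out is large.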