Transfer Learning Batch Normalization

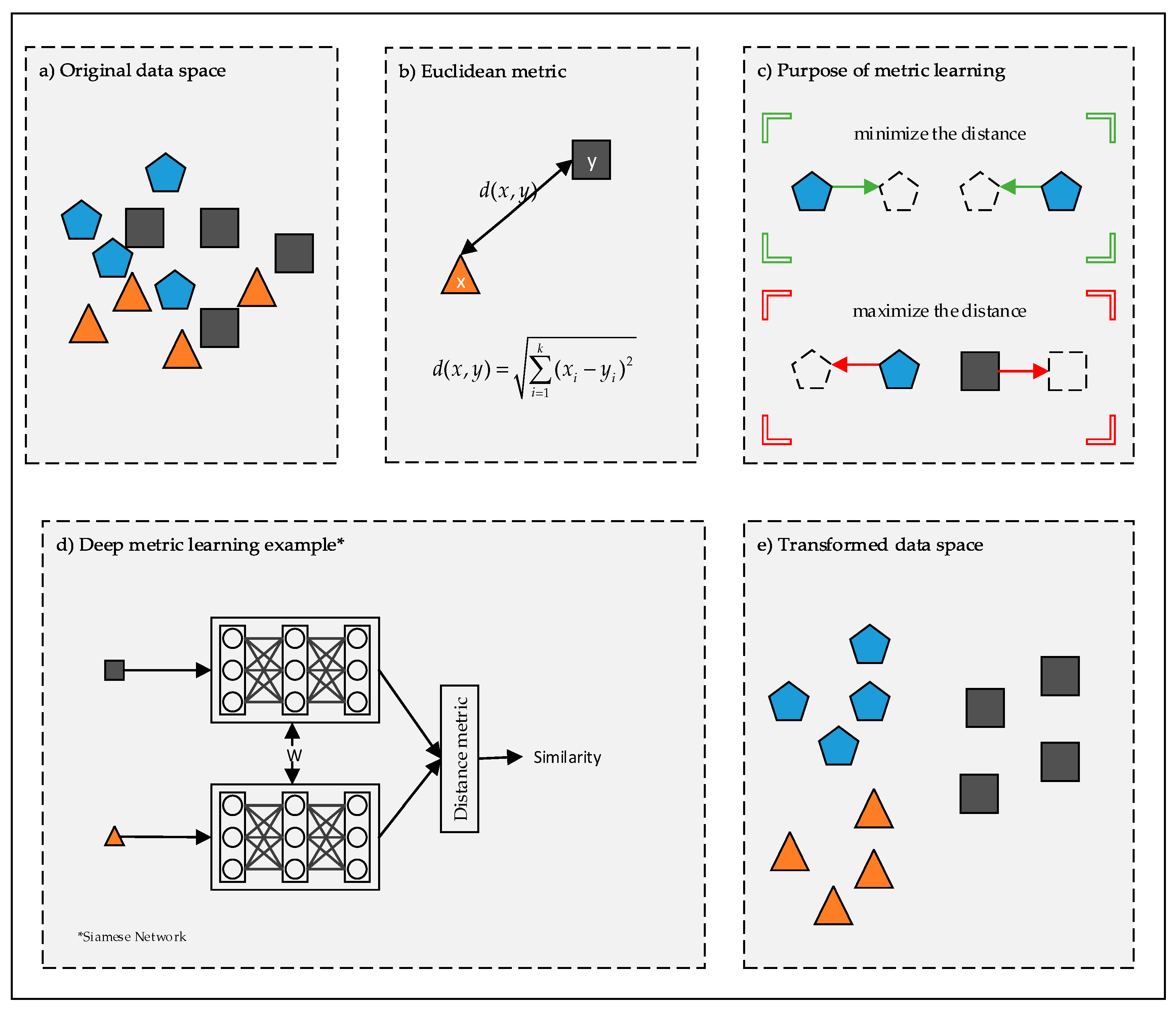

Batch Normalization aims to reduce internal covariate shift and, in doing so, to accelerate the training of deep neural networks.

You can apply transfer learning to convolutional layers that have batch norm applied. Batch Normalization, Instance Normalization, and Layer Normalization differ in the manner in which the normalization statistics (mean and variance) are calculated.
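To make the difference between the three variants concrete, here is a minimal sketch in plain Python. The toy tensor `x`, its `[batch][channel][position]` layout, and the helper `stats` are assumptions made for illustration; real frameworks do the same pooling over tensor axes.

```python
from statistics import fmean, pvariance

# Toy activations with shape [batch N=2][channels C=2][positions L=2].
x = [
    [[1.0, 2.0], [3.0, 4.0]],
    [[5.0, 6.0], [7.0, 8.0]],
]

def stats(values):
    """Return the (mean, population variance) of a flat list of numbers."""
    return fmean(values), pvariance(values)

# Batch norm: one (mean, var) per channel, pooled over batch and positions.
batch_stats = [
    stats([v for sample in x for v in sample[c]])
    for c in range(2)
]

# Layer norm: one (mean, var) per sample, pooled over channels and positions.
layer_stats = [
    stats([v for channel in sample for v in channel])
    for sample in x
]

# Instance norm: one (mean, var) per (sample, channel), pooled over positions only.
instance_stats = [
    [stats(channel) for channel in sample]
    for sample in x
]
```

Only batch norm pools across samples, which is why its statistics depend on the composition of the mini-batch while the other two do not.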

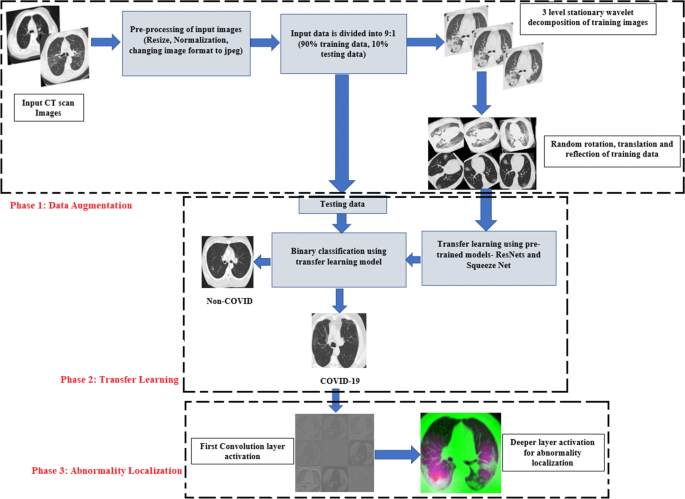

Transfer learning means we use a pretrained model and fine-tune it on new data. If you would like to do transfer learning on models that use batch normalization layers, you can run into a lot of problems.
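The text does not say which problems it has in mind. One commonly reported issue, assumed here for illustration, is that a batch norm layer normalizes with batch statistics in training mode but with stored running statistics in inference mode, and the stored values come from the pretraining data. The class `ToyBatchNorm` below is a hypothetical single-feature sketch, not any framework's implementation.

```python
import math

class ToyBatchNorm:
    """Single-feature batch norm with running statistics (illustrative only)."""

    def __init__(self, momentum=0.1, eps=1e-5):
        self.running_mean = 0.0
        self.running_var = 1.0
        self.momentum = momentum
        self.eps = eps

    def __call__(self, batch, training):
        if training:
            # Training mode: normalize with the current batch statistics
            # and update the running estimates.
            mean = sum(batch) / len(batch)
            var = sum((v - mean) ** 2 for v in batch) / len(batch)
            self.running_mean += self.momentum * (mean - self.running_mean)
            self.running_var += self.momentum * (var - self.running_var)
        else:
            # Inference mode: normalize with the stored running statistics.
            mean, var = self.running_mean, self.running_var
        return [(v - mean) / math.sqrt(var + self.eps) for v in batch]

bn = ToyBatchNorm()

# "Pretraining": activations centred near 0.
for _ in range(200):
    bn([-1.0, 0.0, 1.0], training=True)

# New task: the same layer now sees activations centred near 10.
new_batch = [9.0, 10.0, 11.0]
eval_out = bn(new_batch, training=False)   # uses pretraining statistics
train_out = bn(new_batch, training=True)   # uses this batch's statistics
```

In this sketch `train_out` is centred on zero while `eval_out` is far from it, so the layers after the batch norm see very different inputs depending on the mode. Whether to keep the pretrained statistics or re-estimate them on the new data is a design choice that depends on how close the new data is to the original.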

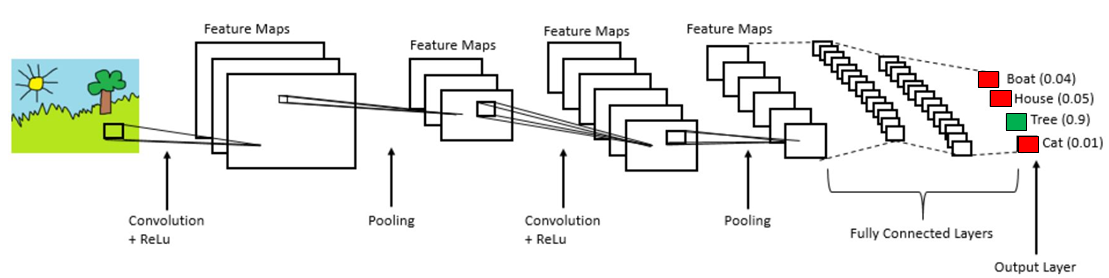

Generally, normalizing activations requires shifting and scaling them by the mean and standard deviation, respectively. On fully connected layers, however, the computed value sees the whole input rather than only local patches, so its influence can be too data dependent, and thus I'll avoid it there. Here we will freeze the weights for all of the …
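The shifting and scaling mentioned above can be written out for a mini-batch of $m$ activations $x_1, \dots, x_m$ of a single feature. The symbols below follow the usual batch normalization notation and are not taken from this text: $\epsilon$ is a small constant for numerical stability, and $\gamma$ and $\beta$ are the learned scale and shift.

```latex
\mu_B = \frac{1}{m} \sum_{i=1}^{m} x_i,
\qquad
\sigma_B^2 = \frac{1}{m} \sum_{i=1}^{m} (x_i - \mu_B)^2,
\qquad
\hat{x}_i = \frac{x_i - \mu_B}{\sqrt{\sigma_B^2 + \epsilon}},
\qquad
y_i = \gamma \hat{x}_i + \beta
```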

Batch Normalization also has a beneficial effect on the gradient flow through the network. The final classification layer is replaced with Global Average Pooling (GAP), Dropout, and Batch-Norm, followed by the Dense and Soft-Max layers. The Batch Normalization layer was introduced in 2015 by Ioffe and Szegedy.

There are still a lot of models that use Batch Normalization layers. Batch normalization, or batchnorm for short, was proposed as a technique to help coordinate the update of multiple layers in the model. However, as a rule of thumb …

… is added and we re-train the model. One transfer learning scenario uses the ConvNet as a fixed feature extractor. Batch normalization provides an elegant way of reparametrizing almost any deep network.
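One way to read "reparametrizing" (an interpretation, not something the text spells out) is that after batch normalization the mean and spread of a layer's output are set directly by the learned shift and scale, whatever the incoming activations look like. The sketch below uses a hypothetical `batch_norm` helper on a single feature, with made-up input values.

```python
import math
from statistics import fmean, pstdev

def batch_norm(batch, gamma, beta, eps=1e-5):
    """Standardize one feature over the batch, then apply scale gamma and shift beta."""
    mean = fmean(batch)
    var = sum((v - mean) ** 2 for v in batch) / len(batch)
    return [gamma * (v - mean) / math.sqrt(var + eps) + beta for v in batch]

# Two inputs with very different scales and offsets.
small = [0.1, 0.2, 0.3, 0.4]
large = [100.0, 250.0, 300.0, 900.0]

out_small = batch_norm(small, gamma=2.0, beta=5.0)
out_large = batch_norm(large, gamma=2.0, beta=5.0)

# Both outputs have mean close to beta and standard deviation close to gamma.
summary = [(fmean(out), pstdev(out)) for out in (out_small, out_large)]
```

Here both entries of `summary` are approximately `(5.0, 2.0)`, so the output statistics are controlled by two parameters instead of by every weight in the layers below.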